Claude Code Is Becoming the Operating System for AI Engineering

The era of one-off prompts is ending. The teams pulling ahead are building systems: persistent memory, reusable skills, automated guardrails, and parallel agent workflows.

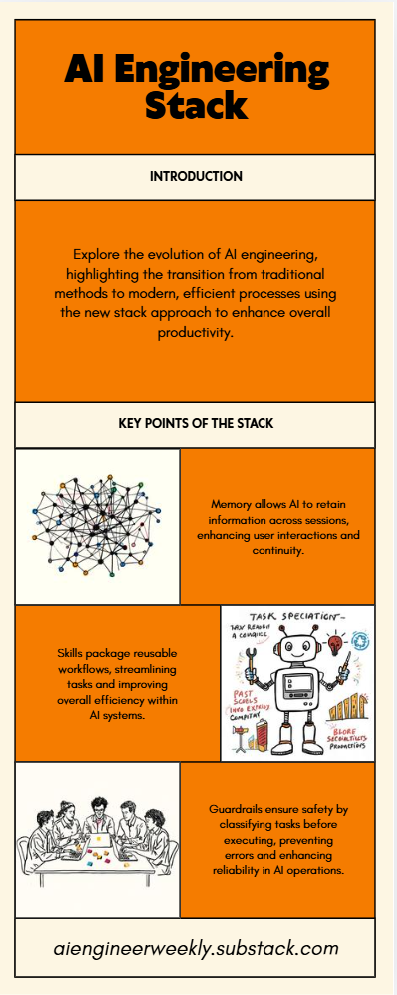

TL;DR: Claude Code is evolving from a coding assistant into a full operating system for AI engineering. The big shift is a four-layer setup: CLAUDE.md for persistent project memory, reusable skills for repeatable workflows, Auto Mode classifiers for governance, and parallel sub-agents for execution. Together, these layers reduce context loss, speed up shipping, and make agent workflows more reliable in production. The takeaway is simple: AI teams are moving beyond clever prompts and toward structured systems. The advantage now comes from building workflows with memory, guardrails, and specialized agent roles, not from using a single model in isolation. Engineers who can design and operate these stacks will have the biggest edge.

For the last year, most AI engineering has looked roughly the same: write a better prompt, paste more context, hope the model stays on track, and repeat when it drifts.

That model is breaking down.

What is replacing it is not just a better prompt stack, but a new operating model for building with AI. The strongest teams are no longer treating Claude Code like a chatbot that occasionally writes code. They are treating it like an operating system for engineering work — one that combines memory, tooling, governance, and coordinated execution into a repeatable production workflow.

At the center of that shift is a simple but powerful four-layer architecture.

The first layer is persistent context. In this model, every project keeps a CLAUDE.md file that acts as shared memory for the system: goals, architecture decisions, current tasks, technical constraints, and the latest working state. Instead of re-explaining the project on every run, the agent starts from a living source of truth. That changes the workflow from “re-prompting” to “continuing.” Context stops being disposable and becomes infrastructure.
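To make the idea concrete, here is a minimal sketch of keeping that memory as structured data and rendering it into a CLAUDE.md file. The `ProjectMemory` class, the `render_claude_md` function, and the section titles are illustrative assumptions, not a format Claude Code requires; the file itself is free-form Markdown.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectMemory:
    """Hypothetical container for the kinds of state described above."""
    goals: list[str] = field(default_factory=list)
    architecture_decisions: list[str] = field(default_factory=list)
    current_tasks: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    working_state: str = ""


def render_claude_md(project: str, memory: ProjectMemory) -> str:
    """Render the memory as Markdown text suitable for a CLAUDE.md file."""
    sections = [
        ("Goals", memory.goals),
        ("Architecture decisions", memory.architecture_decisions),
        ("Current tasks", memory.current_tasks),
        ("Technical constraints", memory.constraints),
    ]
    lines = [f"# {project}", ""]
    for title, items in sections:
        lines.append(f"## {title}")
        lines.extend(f"- {item}" for item in items)
        lines.append("")
    lines.append("## Latest working state")
    lines.append(memory.working_state or "(not recorded yet)")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    memory = ProjectMemory(
        goals=["Ship the billing service"],
        current_tasks=["Add retry logic to the webhook handler"],
        working_state="All tests pass on main.",
    )
    # Print instead of writing to disk so the sketch has no side effects.
    print(render_claude_md("Billing Service", memory))
```

Whether the file is generated like this or edited by hand matters less than the habit: update the working state at the end of each session so the next run continues instead of restarting.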

The second layer is skills. Rather than rebuilding workflows from scratch for testing, security review, UI generation, documentation, or SEO, teams are packaging them into reusable tool packs. The advantage is not just speed. It is consistency. Once a skill is defined well, it becomes an asset the whole team can use again and again without reinventing the process every week.
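As a rough illustration, a skill can be as small as one Markdown file with a name, a description, and numbered steps. The sketch below scaffolds such a file. It assumes a layout of one folder per skill containing a `SKILL.md` with `name` and `description` front matter; treat that layout, and the helper names `skill_markdown` and `write_skill`, as assumptions to check against the current Claude Code documentation.

```python
from pathlib import Path


def skill_markdown(name: str, description: str, steps: list[str]) -> str:
    """Build the text of a skill file: front matter, a title, numbered steps."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        f"# {name}\n\n"
        f"{numbered}\n"
    )


def write_skill(root: Path, name: str, description: str, steps: list[str]) -> Path:
    """Write <root>/<name>/SKILL.md and return its path (assumed layout)."""
    skill_dir = root / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    path = skill_dir / "SKILL.md"
    path.write_text(skill_markdown(name, description, steps), encoding="utf-8")
    return path


if __name__ == "__main__":
    print(
        skill_markdown(
            "security-review",
            "Review a diff for risky changes before merge",
            ["Read the diff", "Flag hard-coded secrets", "Summarize findings"],
        )
    )
```

Checking these files into the repository is what turns a personal prompt habit into a team asset: the skill is versioned and reviewed like any other code.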

The third layer is governance — and this is where the stack gets serious. The old permission model created friction at exactly the wrong moments: too many interruptions for safe actions, not enough structure for risky ones. The emerging answer is Auto Mode classifiers. Before a tool call runs, a lightweight rule layer decides whether the action should proceed automatically, request approval, or be blocked altogether. In practice, that means sensitive file writes can trigger review, sandboxed execution can happen automatically, and trusted external calls can move without slowing the whole workflow down. Governance stops being a bottleneck and becomes an enabler.
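The decision logic is easier to reason about in code. The sketch below is a toy rule layer that maps a proposed tool call to allow, ask, or deny. It is not Claude Code's actual Auto Mode implementation; `ToolCall`, `classify`, the tool names, and every pattern and URL here are hypothetical placeholders.

```python
from dataclasses import dataclass
from enum import Enum
from fnmatch import fnmatch


class Decision(Enum):
    ALLOW = "allow"  # proceed automatically
    ASK = "ask"      # pause and request human approval
    DENY = "deny"    # block outright


@dataclass(frozen=True)
class ToolCall:
    tool: str    # hypothetical tool names: "write_file", "run_command", "http_request"
    target: str  # file path, command line, or URL
    sandboxed: bool = False


# Illustrative policy data; a real team would maintain its own lists.
SENSITIVE_PATTERNS = (".env", "*.pem", "secrets/*", ".github/workflows/*")
TRUSTED_URL_PREFIXES = ("https://api.internal.example/",)
BLOCKED_COMMAND_PREFIXES = ("rm -rf /", "git push --force")


def classify(call: ToolCall) -> Decision:
    """Decide what happens to a tool call before it runs."""
    if call.tool == "run_command":
        if any(call.target.startswith(p) for p in BLOCKED_COMMAND_PREFIXES):
            return Decision.DENY
        # Sandboxed execution runs automatically; anything else needs review.
        return Decision.ALLOW if call.sandboxed else Decision.ASK
    if call.tool == "write_file":
        if any(fnmatch(call.target, p) for p in SENSITIVE_PATTERNS):
            return Decision.ASK
        return Decision.ALLOW
    if call.tool == "http_request":
        trusted = any(call.target.startswith(p) for p in TRUSTED_URL_PREFIXES)
        return Decision.ALLOW if trusted else Decision.ASK
    # Unknown tools default to human review rather than silent approval.
    return Decision.ASK


if __name__ == "__main__":
    print(classify(ToolCall("write_file", "secrets/prod.json")))
    print(classify(ToolCall("run_command", "pytest -q", sandboxed=True)))
```

The design choice worth copying is the default: anything the rules do not recognize falls through to review, so adding a new tool never silently widens what the agent can do.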

The fourth layer is parallel agents. This is the real leap. Instead of one model handling one giant prompt, teams are spinning up specialized sub-agents across product, engineering, QA, security, DevOps, and operations. These agents work in parallel, communicate through defined channels, and break larger projects into coordinated streams of execution. The result is not just faster output. It is a more realistic reflection of how high-performing teams already work — except now the coordination layer is automated.
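The coordination pattern itself is simple fan-out and fan-in. Here is a minimal sketch using Python's `asyncio`, where `run_agent` is a stand-in that only simulates latency; in a real setup it would invoke an actual sub-agent. The role names and the `AgentResult` type are assumptions for illustration.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentResult:
    role: str
    summary: str


async def run_agent(role: str, task: str) -> AgentResult:
    """Stand-in for a real sub-agent call; it only simulates latency."""
    await asyncio.sleep(0.01)
    return AgentResult(role=role, summary=f"[{role}] finished: {task}")


async def run_team(assignments: dict[str, str]) -> list[AgentResult]:
    """Run one sub-agent per role concurrently; results keep input order."""
    jobs = [run_agent(role, task) for role, task in assignments.items()]
    return await asyncio.gather(*jobs)


if __name__ == "__main__":
    team = {
        "engineering": "implement the webhook retry logic",
        "qa": "write regression tests for retries",
        "security": "review the diff for secret handling",
    }
    for result in asyncio.run(run_team(team)):
        print(result.summary)
```

Each role gets one bounded task and returns one structured result, which is what keeps the hand-offs between agents auditable.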

Put those four layers together and the pattern becomes clear: memory, skills, guardrails, and agents. That is the new stack.

And it matters because it solves the biggest weakness in agent workflows today: fragility.

Most agent demos look impressive for five minutes. Real production work is different. It demands continuity, repeatability, safety, and the ability to hand work across functions without losing context. A single long prompt cannot do that reliably. A structured operating system can.

That is also why this conversation is moving beyond tooling and into careers. The market is no longer just rewarding people who can “use AI.” It is rewarding people who can design systems around AI: persistent project memory, governed execution, multi-agent orchestration, and measurable operational gains. The differentiator is shifting from prompt cleverness to systems thinking.

So what should builders do now?

Start simple. Create a CLAUDE.md file for your current project and treat it like operational memory, not documentation. Add a small set of reusable skills for the tasks you do every week. Introduce classifier-based rules for anything that touches sensitive files, external systems, or code execution. Then graduate from a single-agent workflow to a parallel team structure where each agent has a clear role and bounded responsibility.

This is the bigger takeaway: the winning teams in AI engineering will not be the ones with the flashiest demos. They will be the ones with the best operating systems.

The prompt was only the beginning. The stack is the future.