PromptOps Is Dead, Long Live SkillOps

The shift from managing prompts to governing skills is the most important ops change in agentic AI — and most teams are already behind.

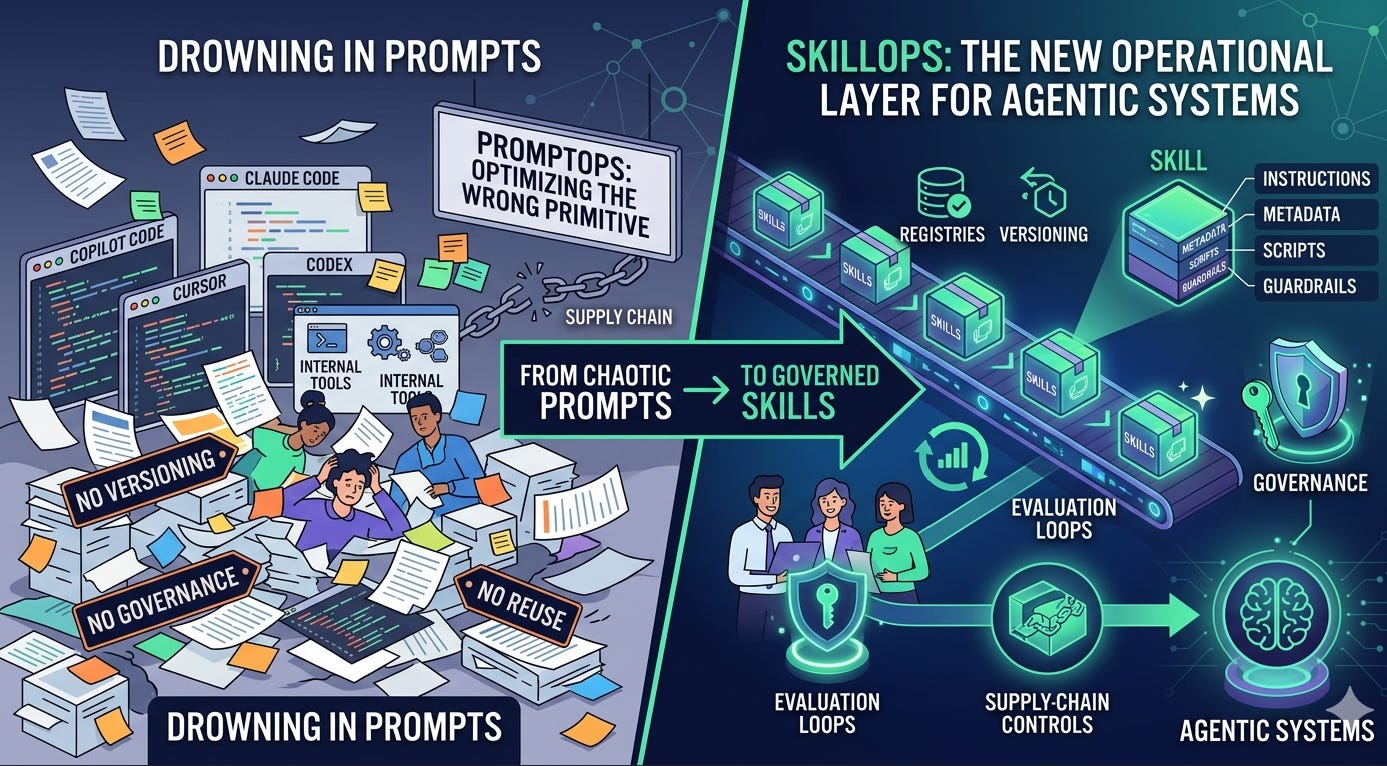

TL;DR - Enterprise teams are drowning in prompts scattered across Claude Code, Copilot, Cursor, Codex, and internal tools — no versioning, no governance, no reuse. The fix isn’t better prompt management. It’s treating skills — self-contained packages of instructions, metadata, scripts, and guardrails — as first-class ops artifacts with registries, evaluation loops, and supply-chain controls. SkillOps — the practice of versioning, evaluating, governing, and composing skills — is the new operational layer for agentic systems. If you’re still doing PromptOps, you’re optimizing the wrong primitive.

The Prompt Sprawl Problem You Already Have

Here’s a pattern I see across enterprise customers: someone writes a great prompt for code review in Claude Code. Someone else writes a different one for Copilot. A third person pastes a variation into Cursor. None of them know the others exist. None of the prompts are versioned or tested. When the LLM vendor changes model behavior in an update, all three break silently.

This is PromptOps at its logical endpoint — a graveyard of undiscoverable, untested, ungoverned text blobs. The fundamental problem isn’t tooling. It’s that prompts are the wrong unit of reuse.

A prompt is a string. A skill is an asset.


What a Skill Actually Is

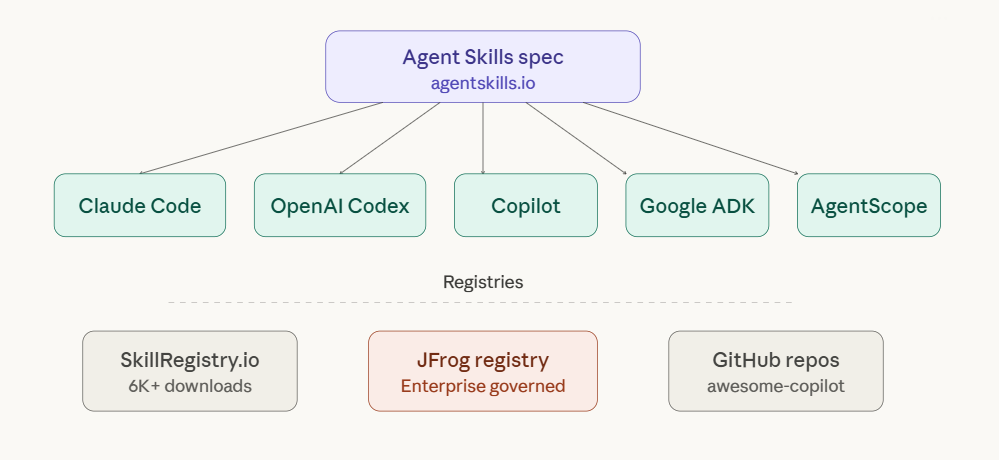

The SKILL.md format — originally published by Anthropic at agentskills.io in December 2025 — has become the de facto standard across every major agentic platform in under six months. Here’s the structure:

my-skill/

├── SKILL.md # Required: metadata + instructions

├── scripts/ # Optional: executable code

├── references/ # Optional: documentation

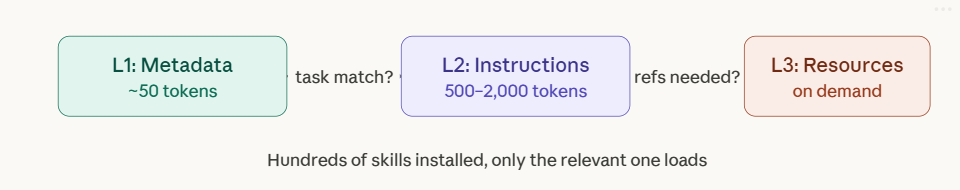

└── assets/ # Optional: templates, resourcesThe SKILL.md file contains YAML frontmatter (name, description) and markdown instructions. That’s it. But the design is deceptively powerful because of progressive disclosure — the mechanism that makes skills scale where prompts don’t.
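To make the file layout concrete, here is a minimal sketch of reading a SKILL.md. The example skill (`code-review`) and the helper `split_skill_md` are hypothetical, and the parser only handles flat `key: value` frontmatter; a real loader would more likely use a proper YAML library.

```python
from typing import Dict, Tuple

# Hypothetical SKILL.md content, for illustration only.
EXAMPLE_SKILL_MD = """\
---
name: code-review
description: Review a pull request for bugs, style issues, and missing tests.
---
# Code review

1. Read the diff.
2. Flag bugs first, then style.
"""


def split_skill_md(text: str) -> Tuple[Dict[str, str], str]:
    """Split SKILL.md text into (frontmatter fields, markdown body).

    Assumption: frontmatter is flat `key: value` lines between two '---' fences.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' frontmatter fence")
    try:
        end = lines.index("---", 1)  # closing fence
    except ValueError:
        raise ValueError("frontmatter is not closed with '---'") from None

    meta: Dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip():
            continue
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"not a 'key: value' line: {line!r}")
        meta[key.strip()] = value.strip()

    # The two fields the article names as the frontmatter contents.
    for required in ("name", "description"):
        if not meta.get(required):
            raise ValueError(f"missing required field: {required}")

    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


meta, body = split_skill_md(EXAMPLE_SKILL_MD)
print(meta["name"])  # code-review
```

The split matters because the two halves are consumed at different times: the frontmatter is what an agent scans up front, and the body is only read once the skill is chosen.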

L1 — Discovery: At startup, the agent loads only the name and description of every available skill. Fifty skills might cost 2,500 tokens total. This is what the agent uses to decide whether to activate a skill.

L2 — Activation: When a task matches a skill’s description, the agent reads the full SKILL.md body into context. Only the relevant skill loads. Everything else stays on disk at zero token cost.

L3 — Execution: If instructions reference scripts, templates, or documentation, those load on demand. A skill can bundle dozens of reference files, but a given invocation might use one.

The result: you can install hundreds of skills with almost no context bloat, because only the short L1 metadata stays resident. Compare this to PromptOps, where every prompt is either always in context or has to be selected by hand.
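The three levels above can be sketched roughly as follows. This is a toy model, not any vendor's loader: `SkillIndex`, `estimate_tokens`, and the placeholder skills are all assumptions, and the 4-characters-per-token estimate is a crude heuristic. In a real agent the model itself matches tasks to descriptions; here the chosen skill name is simply passed in.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Skill:
    name: str
    description: str
    body: str  # full SKILL.md instructions, needed only on activation


def estimate_tokens(text: str) -> int:
    # Crude heuristic (about 4 characters per token); real tokenizers differ.
    return max(1, len(text) // 4)


class SkillIndex:
    def __init__(self, skills: List[Skill]) -> None:
        self._skills: Dict[str, Skill] = {s.name: s for s in skills}

    def discovery_context(self) -> str:
        """L1: name and description for every skill, nothing else."""
        return "\n".join(
            f"{s.name}: {s.description}" for s in self._skills.values()
        )

    def activate(self, name: str) -> str:
        """L2: the full body of the one skill that was selected."""
        return self._skills[name].body


# Fifty placeholder skills with short descriptions and long bodies.
skills = [
    Skill(
        name=f"skill-{i:02d}",
        description=f"Use when the task involves placeholder workflow number {i}.",
        body="Step-by-step instructions...\n" * 200,
    )
    for i in range(50)
]
index = SkillIndex(skills)

resident = estimate_tokens(index.discovery_context())  # L1 cost, always paid
one_active = resident + estimate_tokens(index.activate("skill-07"))  # L1 + one body
everything = sum(estimate_tokens(s.body) for s in skills)  # all bodies loaded

print(f"L1 metadata only:     ~{resident} tokens")
print(f"L1 + one active body: ~{one_active} tokens")
print(f"All bodies in context: ~{everything} tokens")
```

The exact numbers depend on the tokenizer and on how long your descriptions are, but the ordering is the point: the always-resident cost grows with the number of descriptions, not with the size of the skill library.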

The Convergence Nobody Predicted

Six months ago, skills were a Claude Code concept. Today:

Anthropic Claude: Skills across Claude Code, Claude.ai, and the API via the Skills API (/v1/skills endpoints)

OpenAI Codex: Full SKILL.md support with .codex/skills/ directories, implicit and explicit invocation

GitHub Copilot: Agent Skills in VS Code with the same SKILL.md format, progressive disclosure built in

Google ADK: load_skill_from_dir for file-based skills, meta-skills that generate new SKILL.md files at runtime

This is not each vendor independently inventing a similar format. This is a shared specification at agentskills.io that every major player adopted. A skill built for Claude Code drops into Codex or Copilot with minimal changes. The runtime behaviors differ (session management, tool permissions, invocation modes), but the format is portable.


This convergence is the inflection point. It means skills are no longer a platform feature — they’re an interoperable standard. And that changes the operational model entirely.

From PromptOps to SkillOps: What Actually Changes

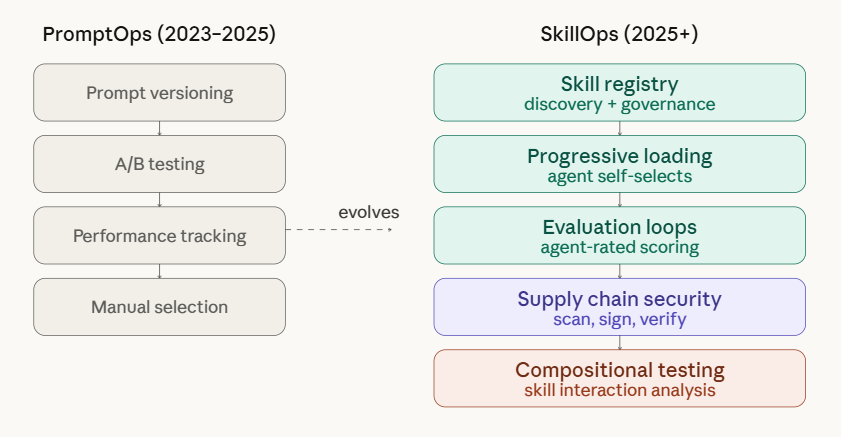

PromptOps treated prompts as the unit of optimization: version them, A/B test them, track their performance. SkillOps treats skills as the unit — but the operational surface is fundamentally different.


Here’s what each layer means in practice:

Skill Registry — A centralized system of record for all skills across your organization. JFrog launched theirs at NVIDIA GTC in March 2026, positioning it as the trust layer for enterprise agent deployments. SkillRegistry.io serves the open-source community with 61 skills and 6,000+ downloads. The point isn’t which registry you pick — it’s that skills become discoverable, governed assets rather than files someone shared on Slack.

Progressive Loading — The agent decides which skills to use, not the developer. This is the operational shift that kills PromptOps: you stop manually selecting prompts and start trusting that good metadata enables good discovery. Write better descriptions, not better selection logic.

Evaluation Loops — Skills get scored on real tasks by agents. Did the code review skill catch the bug? Did the documentation skill produce accurate output? This is where platforms like LangSmith and Langfuse are moving — from prompt-level tracking to skill-level observability.

Supply Chain Security — JFrog’s core insight: skills are the new packages. An unvetted skill can instruct an agent to exfiltrate data, call unauthorized APIs, or bypass guardrails. Scanning, signing, and policy-driven approval workflows aren’t optional for enterprise deployments. Anthropic’s own documentation warns that skills with external URL fetches pose particular risk because fetched content can contain malicious instructions.

Compositional Testing — The hardest and least solved problem. A “summarize patient record” skill is HIPAA-compliant in isolation. Compose it with a “send email” skill and you’ve got a violation. No major platform has compositional compliance testing today.
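Of the layers above, the evaluation loop is probably the easiest to prototype. The sketch below assumes you already log one pass/fail outcome per skill, version, and task; `EvalResult`, `pass_rates`, `regressed`, and the sample data are hypothetical and not tied to LangSmith, Langfuse, or any registry API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class EvalResult:
    skill: str
    version: str
    task_id: str
    passed: bool


def pass_rates(results: List[EvalResult]) -> Dict[Tuple[str, str], float]:
    """Aggregate pass rate per (skill, version)."""
    totals: Dict[Tuple[str, str], List[int]] = defaultdict(lambda: [0, 0])
    for r in results:
        bucket = totals[(r.skill, r.version)]
        bucket[0] += int(r.passed)  # passes
        bucket[1] += 1              # attempts
    return {key: passed / total for key, (passed, total) in totals.items()}


def regressed(
    rates: Dict[Tuple[str, str], float],
    skill: str,
    old: str,
    new: str,
    tolerance: float = 0.05,
) -> bool:
    """True if the new version scores worse than the old one beyond the tolerance."""
    return rates[(skill, new)] < rates[(skill, old)] - tolerance


# Made-up outcomes for two versions of a hypothetical code-review skill.
results = [
    EvalResult("code-review", "1.0.0", "t1", True),
    EvalResult("code-review", "1.0.0", "t2", True),
    EvalResult("code-review", "1.0.0", "t3", True),
    EvalResult("code-review", "1.0.0", "t4", False),
    EvalResult("code-review", "1.1.0", "t1", True),
    EvalResult("code-review", "1.1.0", "t2", False),
    EvalResult("code-review", "1.1.0", "t3", True),
    EvalResult("code-review", "1.1.0", "t4", False),
]

rates = pass_rates(results)
print(rates[("code-review", "1.0.0")])  # 0.75
print(rates[("code-review", "1.1.0")])  # 0.5
print(regressed(rates, "code-review", "1.0.0", "1.1.0"))  # True
```

Keying results by version is the design choice that matters here: it lets a registry block promotion of a skill version that scores worse than the one it replaces, which is not possible when the unit being tracked is an unversioned prompt.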

The Enterprise Skill Governance Gap

Here’s what I don’t see anyone talking about yet: skills solve the reuse problem but create a governance problem that’s arguably worse than what we had with prompts.

With prompts, governance was simple — there was nothing to govern. Prompts were disposable. Skills are durable, versioned, shared, and composed. They’re organizational IP. And in regulated industries (healthcare, financial services, mortgage), they touch compliance boundaries that current registries don’t model.

JFrog gives you the software supply chain layer — scan, sign, verify. That’s necessary but not sufficient. What’s missing is the requirements traceability layer: the ability to map a skill’s behavior to the specific regulatory obligations it must satisfy, and to detect when skill composition violates those obligations even when individual skills are compliant.

This is the problem I’m working on with the CART (Cloud-AI Requirements Traceability) framework, specifically extending it for agentic systems where execution paths aren’t deterministic and skills compose at runtime. The gap between supply-chain security and regulatory traceability is where the next wave of enterprise SkillOps tooling needs to go.
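To make the composition gap concrete, here is a toy check. It is not the CART framework and not a feature of any registry; `SkillProfile`, `Obligation`, the `phi` and `external_email` labels, and the obligation id are all hypothetical. The check is deliberately coarse: it flags any skill set in which a restricted data class and a forbidden channel are both reachable, without tracing whether data actually flows between them.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class SkillProfile:
    name: str
    reads: FrozenSet[str] = frozenset()     # data classes the skill can access
    sends_to: FrozenSet[str] = frozenset()  # channels the skill can emit to


@dataclass(frozen=True)
class Obligation:
    id: str
    data_class: str
    forbidden_channel: str


def violations(skills: List[SkillProfile], obligations: List[Obligation]) -> List[str]:
    """Return ids of obligations that the combined skill set could violate."""
    reads = frozenset().union(*(s.reads for s in skills))
    sends = frozenset().union(*(s.sends_to for s in skills))
    return [
        o.id
        for o in obligations
        if o.data_class in reads and o.forbidden_channel in sends
    ]


summarize = SkillProfile("summarize-patient-record", reads=frozenset({"phi"}))
send_email = SkillProfile("send-email", sends_to=frozenset({"external_email"}))
rules = [Obligation("no-phi-over-email", "phi", "external_email")]

print(violations([summarize], rules))              # []
print(violations([send_email], rules))             # []
print(violations([summarize, send_email], rules))  # ['no-phi-over-email']
```

Each skill passes on its own and the pair fails, which is exactly the case that per-skill scanning and signing cannot catch. A real implementation would need the obligations to come from a maintained requirements mapping rather than a hard-coded list.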

What You Should Do This Week

If you’re starting from zero: Pick one workflow your team does repeatedly (code review, PR descriptions, incident response). Write a SKILL.md for it. Drop it in .claude/skills/ or .codex/skills/. Test it. You’ll learn more about progressive disclosure and description-writing in an hour than from any documentation.

If you already have scattered prompts: Audit them. Pick the five most-used. Convert each to a skill directory with proper metadata. Commit them to your repo. You’ve just started your skill library.

If you’re operating at scale: Evaluate registry options. For startups, SkillRegistry.io and GitHub repos work. For enterprise with compliance requirements, look at JFrog’s Agent Skills Registry or build an internal registry with the Agent Skills SDK (open-source Python library from Microsoft). Either way, add evaluation loops — track which skills agents actually use and how they perform.

If you’re in a regulated industry: Start thinking about the governance gap now. Current registries handle supply-chain security but not regulatory traceability. Map your most critical skills to the compliance obligations they touch. You’ll want this mapping before auditors start asking for it — and they will.