Shadow AI Agents

Your enterprise has more AI agents than employees. Most don’t have identities, owners, or audit trails. Agent identity is the reliability surface that everything else depends on, and the control plane…

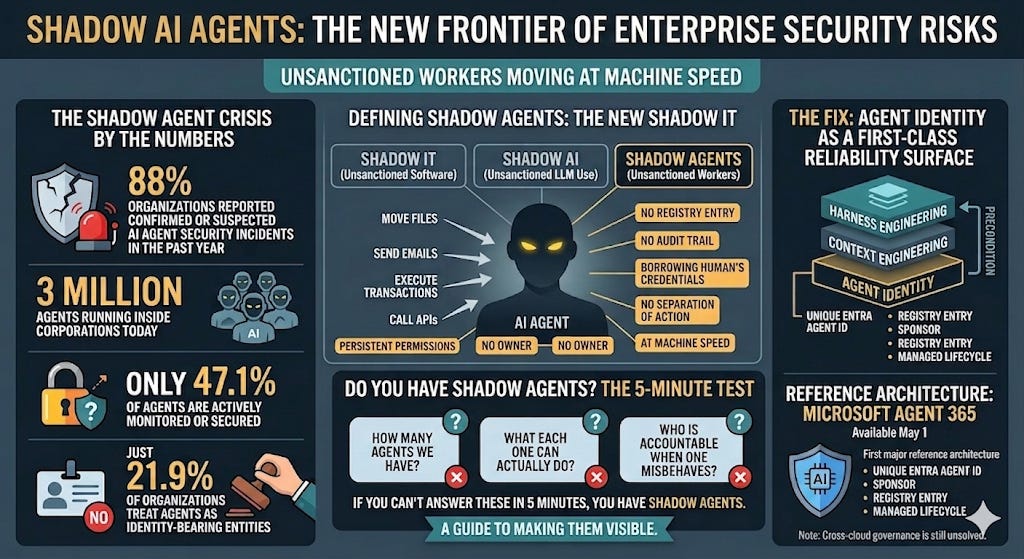

TL;DR - Per Gravitee’s 2026 State of AI Agent Security report, 88% of organizations reported confirmed or suspected AI agent security incidents in the past year. The same report estimates three million agents running inside corporations today, only 47.1% of which are actively monitored or secured. Deloitte’s 2026 State of AI in the Enterprise adds that only one in five companies has a mature governance model for agentic AI. The numbers describe a single underlying problem: most enterprise AI agents are shadow agents, autonomous workers with persistent permissions, no owner, no registry entry, and no audit trail. This is shadow IT’s faster, more dangerous successor. Shadow IT was unsanctioned software. Shadow AI was unsanctioned LLM use. Shadow agents are unsanctioned workers: they move files, send emails, execute transactions, and call APIs at machine speed, often borrowing a human’s credentials so that their actions cannot be told apart from that human’s.

The fix is agent identity as a first-class reliability surface, sitting beneath context engineering and harness engineering as the precondition both rely on. Microsoft’s Agent 365, generally available May 1 at $15 per user per month, is the first major reference architecture: every agent gets a unique Entra Agent ID, a sponsor, a registry entry, and a managed lifecycle. It’s not the whole answer (cross-cloud governance is still unsolved), but it’s the clearest blueprint enterprises have today for what an agent control plane needs to do. If you can’t answer three questions about your environment in five minutes (how many agents you have, what each one can actually do, and who is accountable when one misbehaves), you have shadow agents. This is a guide to making them visible.

The Office Building Analogy

Imagine you walk into your office tomorrow and discover that your company hired forty-five people overnight for every existing employee. They don’t have badges. They report to no one. They have access to your filesystem, email, CRM, customer database, and bank accounts. They never go home, never take vacation, and when something breaks at 3 AM on a Saturday, no one even knows they were there.


This is not hyperbole. It is the actual ratio. Non-human identities (service accounts, API tokens, robotic process automation, and now AI agents) outnumber human identities in the average enterprise by 45 to 1, according to Gartner research, climbing to 80 to 1 in cloud-native organizations. Most operate with excessive privileges. Most run unmonitored. And most are essential to keeping production systems running.

The traditional security playbook was simple: lock down the humans. Enforce MFA. Train employees not to fall for phishing. Review badges. The shadow agents problem rewrites the question entirely. The mandate is no longer “who has admin rights?” but “what has access to what?”, and answering that requires infrastructure most organizations have not built yet.

What Shadow Agents Actually Are

Shadow IT was the previous era’s problem. Employees signed up for SaaS tools without IT approval. Procurement found out months later when the renewal invoice landed.

Shadow AI was the bridge. Employees pasted proprietary data into ChatGPT, Claude, or Gemini. The exposure was real but bounded — a single conversation, a single export, a single user.

Shadow agents are categorically different. Unlike shadow AI, which is the use of unapproved LLMs, shadow agents are granted persistent permissions to your systems. They don’t just answer questions. They move files, send emails, update records, and communicate with customers and other agents. They authenticate continuously. They make decisions while no human is watching. And they typically piggyback on a human user’s credentials — which means in your audit logs, the agent’s actions are indistinguishable from the human’s.

When an agent updates a file, the log says “John Doe updated a file.” It should say “John Doe’s Agent [ID 042] updated a file.” That single missing distinction is the source of most attribution failures, most incident response delays, and most of the 88% incident rate Gravitee found in its 2026 State of AI Agent Security report.
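A minimal sketch of that distinction, assuming a hypothetical `AuditEvent` record (the field names are illustrative, not any product’s log schema). The point is that the agent’s identity and the human it serves are separate fields, so the log line can say whose agent acted instead of collapsing both into the user.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


def legacy_log_line(user: str, action: str, resource: str) -> str:
    # The agent borrowed the user's credentials, so the log can only name the human.
    return f"{user} {action} {resource}"


@dataclass(frozen=True)
class AuditEvent:
    # Hypothetical schema: agent identity and delegating human are distinct fields.
    agent_id: str       # durable agent identity, never a human's account
    on_behalf_of: str   # the human whose request the agent is serving
    action: str
    resource: str
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def log_line(self) -> str:
        # Attribute the action to the agent, qualified by the delegating user.
        return f"{self.on_behalf_of}'s Agent [ID {self.agent_id}] {self.action} {self.resource}"


event = AuditEvent(agent_id="042", on_behalf_of="John Doe",
                   action="updated", resource="a file")
print(legacy_log_line("John Doe", "updated", "a file"))  # John Doe updated a file
print(event.log_line())  # John Doe's Agent [ID 042] updated a file
```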

The pattern is predictable and already widespread. Marketing deploys an agent for content generation. Sales spins up one for lead scoring. Finance automates invoice processing. Each was approved by a manager who reasonably assumed IT would catch anything risky. IT never sees them, because the agents enter the environment through OAuth grants, browser extensions, MCP integrations, and developer pipelines that no central registry tracks. Six months later the agents are doing critical work. Twelve months later one of them malfunctions and exposes a customer database. The post-mortem reveals nobody knew it existed.

Gravitee’s research puts the current population at roughly three million agents operating inside corporations, of which an estimated 1.5 million are running with no oversight: accessing sensitive data, making decisions, and connecting to critical systems with no audit trail. Gartner expects 40% of enterprise applications to embed task-specific AI agents by the end of this year, up from less than 5% in 2025. IDC projects 1.3 billion autonomous agents in circulation by 2028. None of those agents will govern themselves.

Why Reliability Engineering Alone Doesn’t Solve This

I’ve written extensively about Model Reliability Engineering — the discipline of ensuring AI behavior is reliable in production. MRE has two surfaces: context engineering (what the model knows at inference) and harness engineering (what users see, with what guardrails).

Both surfaces assume something they shouldn’t: that you know which agent is calling the model, whose permissions it carries, and who is accountable if it misbehaves.

Take a faithfulness SLO failure. An agent generates a response unsupported by the retrieved context. MRE tells you the metric fired. It does not tell you which of your 412 agents fired it, which user it was acting on behalf of, what permissions it was operating under, or whether the failure exposed data the agent should never have been able to access in the first place. That investigation requires identity — and most organizations cannot produce it.
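To make the triage gap concrete, here is a minimal sketch assuming two hypothetical record shapes, `SloAlert` and `RegistryEntry`, and assuming the model call was tagged with an agent ID at request time. It shows the three outcomes an investigator can hit: no identity at all, an identity with no registry entry, or a fully attributed alert that names scopes and a human to escalate to.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional


@dataclass(frozen=True)
class RegistryEntry:
    agent_id: str
    sponsor: str              # accountable human
    scopes: FrozenSet[str]    # permissions the agent's credentials carry


@dataclass(frozen=True)
class SloAlert:
    metric: str
    value: float
    agent_id: Optional[str]      # None if the model call carried no agent identity
    on_behalf_of: Optional[str]  # delegating user, if known


def triage(alert: SloAlert, registry: Dict[str, RegistryEntry]) -> str:
    head = f"{alert.metric}={alert.value:.2f}"
    if alert.agent_id is None:
        # The metric fired, but nothing says who called the model.
        return f"{head}: unattributable, no agent identity on the call"
    entry = registry.get(alert.agent_id)
    if entry is None:
        # An identified caller with no registry entry is a shadow agent.
        return f"{head}: agent {alert.agent_id} is not in the registry"
    return (f"{head}: agent {entry.agent_id} acting for {alert.on_behalf_of}, "
            f"scopes={sorted(entry.scopes)}, escalate to {entry.sponsor}")


registry = {"agent-042": RegistryEntry("agent-042", "alice@example.com", frozenset({"crm.read"}))}
print(triage(SloAlert("faithfulness", 0.71, "agent-042", "John Doe"), registry))
print(triage(SloAlert("faithfulness", 0.71, None, None), registry))
```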

Agent identity is therefore not a sibling discipline to MRE. It’s a precondition. Reliability without identity is unauditable. Observability without attribution is theater. You cannot enforce a purpose limitation on an agent whose purpose was never declared. Kiteworks’ 2026 Data Security and Compliance Risk Forecast quantifies the gap directly: 63% of organizations cannot enforce purpose limitations on what their agents are authorized to do, and 60% cannot terminate a misbehaving agent once it starts operating.

This is why agent identity belongs as the next reliability surface — not in addition to context and harness engineering, but underneath them. Without it, the rest of the stack cannot carry weight.

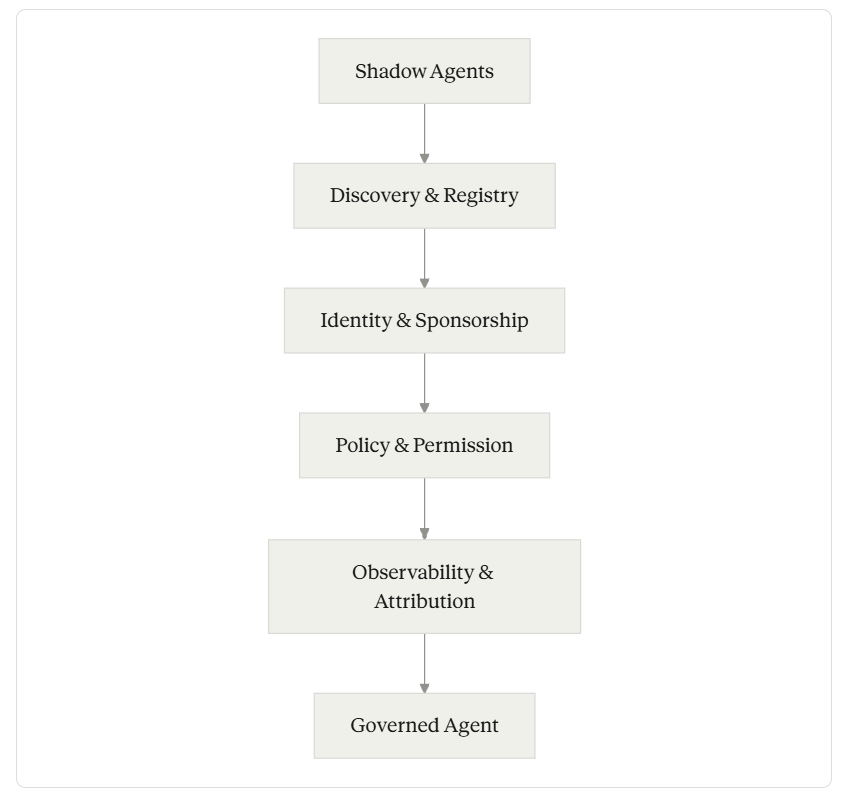

The Four Pillars of an Agent Control Plane

Across the most coherent enterprise frameworks emerging in the last six months — Microsoft’s Agent 365, the Cloud Adoption Framework guidance for agent governance, the OWASP Top 10 for Agentic Applications, and the NIST AI Agent Standards Initiative announced in January 2026 — the same four pillars surface repeatedly. Together they describe what an agent control plane has to do.

Discovery and registry. Every agent in the environment is inventoried. Not just the ones IT sanctioned. The ones running through OAuth grants, browser extensions, MCP servers, low-code platforms, and developer scripts. If you don’t know an agent exists, you cannot govern it. Most organizations cannot produce this list today.
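Discovery ultimately reduces to a reconciliation between what collectors observe and what the registry knows. A minimal sketch, assuming hypothetical per-channel collectors have already produced normalized agent identifiers (in practice, matching the same agent across OAuth grants, extensions, and MCP configurations is the hard part). The function name `reconcile` and the channel names are illustrative.

```python
from typing import Dict, List, Set, Tuple


def reconcile(discovered: Dict[str, Set[str]],
              registered: Set[str]) -> Tuple[Dict[str, List[str]], List[str]]:
    """Compare agents observed per discovery channel against the registry.

    Returns (shadow, stale):
      shadow: channel -> agents seen there that have no registry entry
      stale:  registered agents not observed in any channel (candidates for
              decommissioning review)
    """
    seen: Set[str] = set().union(*discovered.values())
    shadow = {channel: sorted(agents - registered)
              for channel, agents in discovered.items()
              if agents - registered}
    stale = sorted(registered - seen)
    return shadow, stale


discovered = {
    "oauth_grants": {"agent-a", "agent-b"},
    "mcp_servers": {"agent-c"},
    "browser_extensions": {"agent-a"},
}
shadow, stale = reconcile(discovered, registered={"agent-a", "agent-z"})
print(shadow)  # {'oauth_grants': ['agent-b'], 'mcp_servers': ['agent-c']}
print(stale)   # ['agent-z']
```

The stale list matters as much as the shadow list: a registry entry for an agent nobody can find is an identity that may still hold live permissions.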

Identity and sponsorship. Each agent receives a unique, durable identifier — distinct from any human user’s credentials. Each identity has a sponsor: a human accountable for the agent’s lifecycle, its permissions, and its decommissioning. Microsoft’s Entra Agent ID is the most concrete implementation of this primitive available today, but the principle is portable: no agent operates without an owner.
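The sponsorship rule can be enforced at registration time. A sketch under stated assumptions: `register_agent`, `orphaned_by`, and the `agent-` ID prefix are hypothetical, and the set of known humans stands in for a real directory lookup. It illustrates the principle only and is not how Entra Agent ID works internally.

```python
import uuid
from dataclasses import dataclass
from typing import Dict, List, Set


class RegistrationError(ValueError):
    """Raised when an agent cannot be registered under the sponsorship rule."""


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str   # unique, durable, and separate from any human account
    name: str
    sponsor: str    # accountable human; mandatory


def register_agent(name: str, sponsor: str, humans: Set[str],
                   registry: Dict[str, AgentIdentity]) -> AgentIdentity:
    # No agent operates without an owner: the sponsor must be a known human.
    if sponsor not in humans:
        raise RegistrationError(f"sponsor {sponsor!r} is not a known human user")
    # The "agent-" namespace keeps agent IDs apart from user IDs,
    # assuming human IDs never use that prefix.
    identity = AgentIdentity(agent_id=f"agent-{uuid.uuid4()}", name=name, sponsor=sponsor)
    registry[identity.agent_id] = identity
    return identity


def orphaned_by(departing: str, registry: Dict[str, AgentIdentity]) -> List[str]:
    # When a sponsor leaves, these agents need a new owner or decommissioning.
    return sorted(a.agent_id for a in registry.values() if a.sponsor == departing)


registry: Dict[str, AgentIdentity] = {}
bot = register_agent("invoice-bot", "alice@example.com", {"alice@example.com"}, registry)
print(orphaned_by("alice@example.com", registry) == [bot.agent_id])  # True
```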

Policy and permission. Agents authenticate using short-lived, task-specific tokens, not long-lived shared credentials. Permissions are scoped to least privilege by default. Conditional access policies adapt in real time to risk signals. Purpose limitation is encoded — what the agent is allowed to do, and equally important, what it is not allowed to do, even when prompted to.
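A sketch of short-lived, task-scoped credentials with default-deny authorization, using only Python’s standard library. The HMAC-signed token format, `SIGNING_KEY`, `DENIED_ACTIONS`, and `REVOKED_AGENTS` are hypothetical stand-ins; a real deployment would use tokens minted and verified by its identity provider. The revocation set is included to show how a kill switch can take effect on the very next authorization check.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional, Set

SIGNING_KEY = b"demo-only-key"  # hypothetical; use a managed, rotated key in practice
DENIED_ACTIONS = {"payments.transfer", "users.delete"}  # hypothetical purpose limits
REVOKED_AGENTS: Set[str] = set()  # kill switch: checked on every authorization


def _sign(payload: str) -> str:
    return hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(agent_id: str, scopes: Set[str], ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Mint a short-lived token scoped to one task's permissions."""
    now = time.time() if now is None else now
    claims = {"sub": agent_id, "scopes": sorted(scopes), "exp": now + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode()).decode()
    return f"{payload}.{_sign(payload)}"


def authorize(token: str, action: str, now: Optional[float] = None) -> bool:
    """Default deny: allow only a valid, unexpired, unrevoked token carrying the scope."""
    now = time.time() if now is None else now
    payload, _, signature = token.rpartition(".")
    if not payload or not hmac.compare_digest(signature.encode(), _sign(payload).encode()):
        return False  # malformed or tampered
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if now >= claims["exp"] or claims["sub"] in REVOKED_AGENTS:
        return False  # expired, or agent terminated
    if action in DENIED_ACTIONS:
        return False  # explicit purpose limit beats any granted scope
    return action in claims["scopes"]


token = issue_token("agent-042", {"crm.read"}, ttl_seconds=300, now=1000.0)
print(authorize(token, "crm.read", now=1100.0))   # True
print(authorize(token, "crm.write", now=1100.0))  # False: scope never granted
print(authorize(token, "crm.read", now=1300.0))   # False: expired
```

Deny entries win over granted scopes, so a purpose limit still holds if a token is over-provisioned.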

Observability and attribution. Every action an agent takes is logged with the agent’s identity, the user it was acting on behalf of, the tools it called, and the data it touched. Behavioral baselines detect drift. Anomalies trigger investigation. When something goes wrong, the audit trail answers “what happened” in minutes, not in days of forensic archaeology.
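Behavioral baselines can start simple. This sketch flags two kinds of drift for a single agent: a tool it has never called before, and call volume more than three standard deviations from its own history. The per-tool daily-count baseline, the threshold, and the function names are assumptions chosen for illustration, not a recommended detector.

```python
import statistics
from typing import Dict, List


def is_volume_anomaly(history: List[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's call count if it sits more than `threshold` std devs from the baseline."""
    if len(history) < 2:
        return False  # too little history to form a baseline
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return today != mean
    return abs(today - mean) / stdev > threshold


def drift_findings(baseline: Dict[str, List[int]], today: Dict[str, int],
                   threshold: float = 3.0) -> List[str]:
    """Compare one agent's per-tool call counts today against its own history."""
    findings = []
    for tool, count in sorted(today.items()):
        history = baseline.get(tool)
        if not history:
            findings.append(f"new tool: {tool}")  # never used before
        elif is_volume_anomaly(history, count, threshold):
            findings.append(f"volume anomaly: {tool} ({count} calls)")
    return findings


baseline = {"crm.read": [100, 110, 95, 105, 90]}
print(drift_findings(baseline, {"crm.read": 104, "email.send": 3}))
# ['new tool: email.send']
print(drift_findings(baseline, {"crm.read": 400}))
# ['volume anomaly: crm.read (400 calls)']
```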

These four pillars are not novel individually. Identity governance has been a discipline for decades. What is new is applying them to entities that operate continuously, autonomously, and at machine speed, with permissions equal to or exceeding those of privileged human users, and doing so before the agent population grows past the point of practical inventory.

Pillars of an Agent Control Plane

Microsoft Agent 365 as the Reference Architecture

Agent 365, generally available May 1, 2026, is the most complete implementation of these four pillars shipping today. It deserves attention not because it is the only solution but because it is the first concrete blueprint enterprises can point to and copy.

The Agent 365 inventory in the Microsoft 365 admin center captures every agent registered through Microsoft channels — Copilot Studio, Microsoft Foundry, Teams, and third-party agents that integrate via the Agent 365 SDK. Microsoft Entra issues each agent a unique Agent ID and applies identity governance: lifecycle controls, conditional access, sponsor relationships, and access packages. Microsoft Purview applies data protection policies and audits agent activity. Microsoft Defender provides threat detection and incident response, with visibility into attack paths.

Microsoft is its own first proof point. The company has been running Agent 365 internally as “Customer Zero” and reports more than 500,000 agents mapped within its own environment, generating more than 65,000 responses per day for employees in a representative 28-day window. During the public preview, tens of millions of agents were registered in the Agent 365 registry across customer environments. The control plane was load-tested before launch.

Worth understanding what Agent 365 does not solve. Its strength is also its boundary: it is anchored to the Microsoft ecosystem. Agents running in AWS Bedrock, GCP Vertex, OpenAI’s platform, Anthropic’s API, GitHub Actions, or internal frameworks built on LangChain or CrewAI do not automatically appear in the Agent 365 registry. Cross-cloud governance still requires configuration or third-party tooling. Several aspects of the security story are also incomplete on day one — runtime threat protection through the Agent 365 tools gateway is entering public preview in April rather than shipping at GA, and security posture management for Foundry and Copilot Studio agents remains in public preview after launch.

Agent 365 is the most coherent reference architecture today, but it is one path among several. To pick well, architects need the broader landscape.

The Control Plane Is a Category, Not a Product

Microsoft is not alone in this space. As of mid-2026, six distinct categories of vendor are racing toward the same control-plane primitives, with overlapping and sometimes conflicting approaches.

Hyperscaler-native control planes. Each major cloud is building its own version of Agent 365. AWS Bedrock AgentCore added a managed Agent Registry in April 2026, with identity, gateway, sandboxed runtime, observability, and a policy module that runs outside the agent. VentureBeat’s framing of the difference is sharp — AWS optimizes for build-velocity, with identity baked into the runtime layer rather than sitting on top. Google rebranded Vertex AI as Gemini Enterprise Platform and built a Kubernetes-style governance control plane around it, with Agent Registry integrations via Apigee, plus VPC Service Controls, CMEK, and a new Vertex AI Governance layer. Three hyperscalers, three philosophies, each bound to its own ecosystem. Forrester analyst Charlie Dai flagged the corollary risk: enterprises adopting AWS, Microsoft, and Google registries in parallel could end up recreating the exact fragmentation these tools are meant to solve. Registry sprawl is the second-order failure mode of the control-plane era.

The neutral identity-fabric play. Okta plus Auth0 is the most ambitious cross-ecosystem competitor. Okta for AI Agents entered Early Access in March 2026; Auth0 for AI Agents handles the build-time identity primitives — Token Vault, Fine-Grained Authorization for RAG, CIBA for asynchronous human consent. The strategically important move is Cross App Access (XAA), an OAuth extension built specifically for agent-to-application delegation, with launch support from AWS, Google Cloud, Salesforce, Box, Glean, and others. XAA was recently merged into MCP as “Enterprise-Managed Authorization.” If XAA becomes the actual interoperability standard, it matters more than any single vendor’s control plane. Strata Identity’s Maverics Agentic Identity is a similar pure-play approach, with just-in-time provisioning and OIDC/OAuth subject-actor binding.
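Cross App Access defines its own flow, which is not reproduced here. As a sketch of the underlying idea of subject-actor binding, the standard OAuth 2.0 Token Exchange grant (RFC 8693) lets a client present a user’s token as the subject and the agent’s token as the actor; the issued token can then carry an `act` claim naming the agent, with prior actors nested inside it. The audience URL, scope, token values, and helper names below are hypothetical.

```python
from typing import Any, Dict, List
from urllib.parse import urlencode

# Identifiers defined by OAuth 2.0 Token Exchange (RFC 8693).
GRANT_TOKEN_EXCHANGE = "urn:ietf:params:oauth:grant-type:token-exchange"
TOKEN_TYPE_ACCESS = "urn:ietf:params:oauth:token-type:access_token"
TOKEN_TYPE_JWT = "urn:ietf:params:oauth:token-type:jwt"


def delegation_request_body(user_token: str, agent_token: str,
                            audience: str, scope: str) -> str:
    """Form body asking the authorization server for a token that names both
    the user (subject) and the agent (actor)."""
    return urlencode({
        "grant_type": GRANT_TOKEN_EXCHANGE,
        "subject_token": user_token,          # who the work is for
        "subject_token_type": TOKEN_TYPE_ACCESS,
        "actor_token": agent_token,           # who is doing the work
        "actor_token_type": TOKEN_TYPE_JWT,
        "audience": audience,
        "scope": scope,
    })


def actor_chain(claims: Dict[str, Any]) -> List[str]:
    """Walk the nested `act` claim: current actor first, then prior actors."""
    chain = []
    act = claims.get("act")
    while act:
        chain.append(act["sub"])
        act = act.get("act")
    return chain


# Shape of claims an issued delegation token might carry (values hypothetical).
claims = {"sub": "john.doe", "act": {"sub": "agent-042"}}
print(f"{claims['sub']} via {actor_chain(claims)}")  # john.doe via ['agent-042']
```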

Non-human-identity vendors. Entro Security, TrustLogix, BeyondTrust Pathfinder, CyberArk, GitGuardian, Keeper, and AppViewX with Eos came from privileged access, non-human identity, or secrets management and extended into agents. BeyondTrust Pathfinder is the closest a non-hyperscaler comes to a true unified control plane, combining PAM, CIEM, ITDR, secrets management, and agentic AI security in a single telemetry layer. Their thesis is the cross-environment one: agents do not respect ecosystem boundaries, so neither should governance.

IGA retrofit. Saviynt shipped ISPM for AI Agents and ISPM for NHI in early 2026. SailPoint and others are extending traditional identity governance to agents. “Extending” is the operative word. This is the retrofit path, with the trade-offs that implies.

Cross-cloud data-policy layer. Bedrock Data’s ArgusAI sits adjacent to identity, governing what data agents can access across AWS Bedrock, Snowflake Cortex, ChatGPT Enterprise, and Google Vertex AI. Write a policy in plain English once, enforce it across clouds. Identity governance and data governance are converging.

The open-standard foundation few are pointing to. SPIFFE/SPIRE — CNCF-graduated, production-proven for workload identity in cloud-native environments, integrated natively into HashiCorp Vault Enterprise as of version 1.21, shipping as a Red Hat OpenShift operator. SPIFFE was not built for AI agents specifically, but it solves precisely the right problem: short-lived cryptographic identities for non-human workloads, attested by what the workload is rather than what secret it holds. Most enterprise architects have not connected SPIFFE to agent governance yet. They should. For platform-agnostic, multi-cloud agent identity, SPIFFE/SPIRE is the most mature and standards-aligned foundation available — and it composes cleanly underneath any of the higher-level control planes above.
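A SPIFFE ID is a URI of the form `spiffe://<trust-domain>/<path>`. The sketch below checks only the syntax rules as I understand them from the SPIFFE ID specification (lowercase trust domain; path segments of letters, digits, `.`, `-`, `_`; no empty, `.`, or `..` segments; no trailing slash, port, or query). The security itself comes from SPIRE attesting the workload and issuing short-lived SVIDs, not from this parser. The `/agents/<team>/<name>` path convention is a hypothetical naming scheme.

```python
import re
from typing import List, Tuple

_TRUST_DOMAIN = re.compile(r"[a-z0-9._-]+")   # lowercase letters, digits, . _ -
_SEGMENT = re.compile(r"[A-Za-z0-9._-]+")     # letters, digits, . _ -


def parse_spiffe_id(spiffe_id: str) -> Tuple[str, List[str]]:
    """Split a SPIFFE ID into (trust domain, path segments), rejecting bad syntax."""
    prefix = "spiffe://"
    if not spiffe_id.startswith(prefix):
        raise ValueError("scheme must be spiffe://")
    trust_domain, slash, path = spiffe_id[len(prefix):].partition("/")
    if not _TRUST_DOMAIN.fullmatch(trust_domain):
        raise ValueError(f"invalid trust domain: {trust_domain!r}")
    if slash and not path:
        raise ValueError("trailing slash is not allowed")
    segments = path.split("/") if path else []
    for seg in segments:
        # Empty segments (//), "." and ".." are all forbidden.
        if seg in (".", "..") or not _SEGMENT.fullmatch(seg):
            raise ValueError(f"invalid path segment: {seg!r}")
    return trust_domain, segments


print(parse_spiffe_id("spiffe://example.org/agents/finance/invoice-bot"))
# ('example.org', ['agents', 'finance', 'invoice-bot'])
```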

Practical guidance breaks down by deployment shape. Heavily Microsoft stacks should default to Agent 365 at $15 per user per month standalone, or included in the new M365 E7 bundle at $99, as the path of least resistance. Heavily AWS or Google deployments should look at AgentCore Registry and Gemini Enterprise’s governance layer respectively as the analogous bets, with the same architectural pattern and same ecosystem boundary. Multi-cloud organizations need Okta plus Auth0’s identity fabric or one of the NHI-pedigree platforms — BeyondTrust Pathfinder, Entro, TrustLogix — for cross-environment governance that hyperscaler-native tools cannot deliver. Cloud-native shops running Kubernetes and a service mesh should evaluate SPIFFE/SPIRE as the open-standard foundation that composes underneath any of the above. Teams still early, with fewer than a dozen agents in production, should build identity in from day one rather than retrofit it later. The shadow agents problem is what retrofit looks like at scale, and the cost grows by an order of magnitude with every doubling of agent population.

A Three-Question Diagnostic

Before any tooling decision, every organization running agents should be able to answer three questions in under five minutes. The more of them that draw “I don’t know” or “I’m not sure,” the greater the shadow agent exposure.

How many AI agents are running in our environment right now? Not the ones IT approved. The total — including the ones spun up via OAuth grants, browser extensions, MCP integrations, and developer scripts. Most organizations cannot answer this within an order of magnitude.

What can each agent actually do? Not what it was designed to do. What permissions does its token carry, what systems does it have read access to, what systems does it have write access to, and what would happen if a malicious prompt convinced it to use the broadest interpretation of its access? The 63% of organizations that cannot enforce purpose limitations are by definition unable to bound this.

Who is accountable if an agent misbehaves at 3 AM on a Saturday? Not “the team that built it.” A specific human, on call, with the authority to decommission the agent. If the answer requires a meeting to determine, the agent has no owner.

If none of the three can be answered, a major incident is a matter of when, not if. The organizations that will survive the next 24 months of agent adoption without a public incident are the ones that can answer all three today, with names, numbers, and pages.
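The diagnostic can also be run against inventory data rather than from memory. A minimal sketch, assuming a hypothetical `AgentRecord` shape and a `discovered_count` produced by whatever discovery process exists; the `how_many` check is only a proxy, since discovery can undercount too.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    scopes: Optional[FrozenSet[str]]  # None: permissions never enumerated
    on_call_owner: Optional[str]      # None: no named human who can decommission it


def three_question_diagnostic(registry: List[AgentRecord],
                              discovered_count: int) -> Dict[str, bool]:
    """True means the question can be answered from inventory data alone."""
    return {
        # Proxy: the registry accounts for every agent discovery actually found.
        "how_many": len(registry) == discovered_count,
        "what_each_can_do": all(r.scopes is not None for r in registry),
        "who_is_accountable": all(r.on_call_owner for r in registry),
    }


registry = [
    AgentRecord("agent-042", frozenset({"crm.read"}), "alice@example.com"),
    AgentRecord("agent-107", None, None),
]
answers = three_question_diagnostic(registry, discovered_count=9)
print(sum(not ok for ok in answers.values()), "of 3 unanswered")  # 3 of 3 unanswered
```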

The Bottom Line

Agent adoption is moving faster than identity governance. Forty percent of enterprise applications embedding agents by year-end is not an adoption curve — it is a vertical line. The 1.3 billion agent projection by 2028 means that within two years, autonomous non-human workers will outnumber every other class of digital identity inside the enterprise.

The organizations that treat agent identity as a first-class reliability surface — with discovery, sponsorship, scoped permissions, and audit-grade observability — will spend the next two years building production capability. The organizations that don’t will spend them doing post-incident forensics on agents they didn’t know they had.

Reliability begins with identity. If you cannot tell who acted, you cannot tell what happened. If you cannot tell what happened, you cannot fix it. Everything else in the agent stack — context engineering, harness engineering, evaluation, incident response — assumes that question is already answered.

It usually isn’t. That’s the work.
