The Vercel Breach RCA: Agent Identity Is the New Attack Surface

One OAuth grant, one compromised AI vendor, one platform breach. Every team deploying agents shares the same architecture.

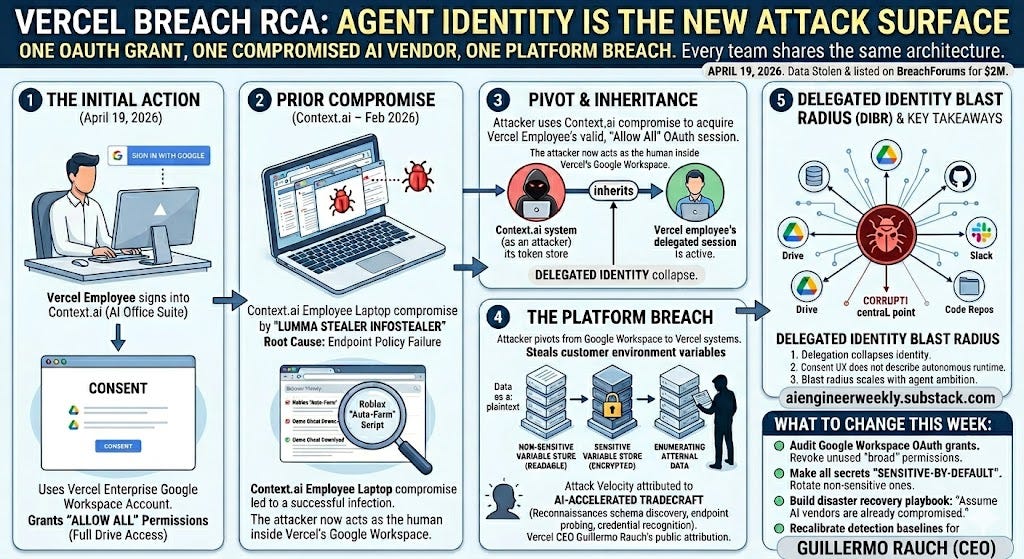

TL;DR - On April 19, 2026, Vercel disclosed a breach of its internal systems. The root cause wasn’t a zero-day, a supply chain poisoning of an npm package, or a perimeter failure. It was an OAuth grant — a Vercel employee signed into Context.ai, a 300-connector agentic “AI office suite,” using their Vercel enterprise Google Workspace account and granted “Allow All” permissions. Context.ai was already compromised from a February 2026 infostealer infection on an employee laptop. The attacker inherited that OAuth session, pivoted into Vercel’s Google Workspace, and enumerated customer environment variables that were stored in plaintext-recoverable form because they weren’t explicitly marked “sensitive.” Vercel CEO Guillermo Rauch publicly attributed the attacker’s “operational velocity” to AI-accelerated tradecraft. Stolen data was listed on BreachForums for $2M. The mainstream framing — “shadow AI,” “third-party risk,” “OAuth supply chain” — is correct but incomplete. The right framing for AI engineers: this is the first major platform breach where an AI agent holding delegated identity was the pivot point. Every agent, every MCP server, every AI productivity tool your team is shipping or consuming runs on exactly this pattern. If you operate agents, audit your OAuth grants this week, default-sensitive every secret you store, and stop treating agent vendors as if they were ordinary SaaS.

What actually happened

Here is the compressed attack chain, reconstructed from Vercel’s bulletin, Context.ai’s advisory, Hudson Rock’s infostealer analysis, and Trend Micro’s post-incident writeup.

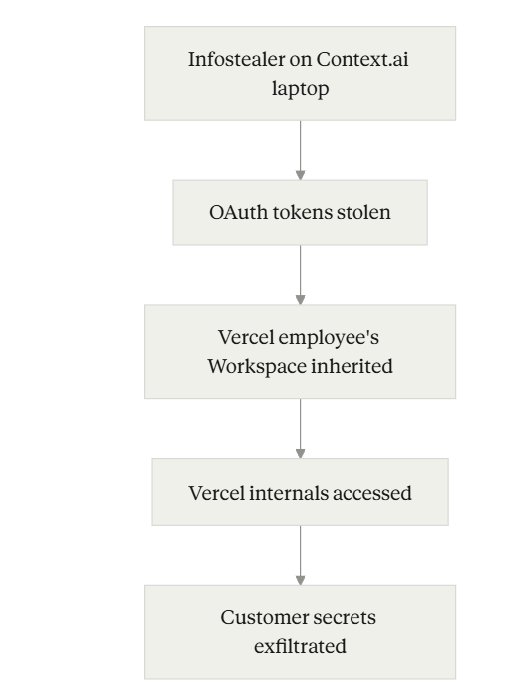

Attack chain

Each hop is worth pausing on.

The initial compromise was human, not technical. According to Hudson Rock’s analysis, the Context.ai employee’s browser history showed active searches for Roblox “auto-farm” scripts — a classic Lumma Stealer distribution vector. An enterprise SaaS vendor’s entire security posture was compromised because one employee downloaded game cheats on a corporate laptop. This is a failure of endpoint policy, not crypto or architecture.

The pivot was an OAuth grant, not a credential theft. Context.ai’s own statement is worth reading carefully: Vercel wasn’t even a Context.ai customer. A single Vercel employee had signed up for the product using their Vercel enterprise Google account and granted full read access to Google Drive during onboarding. When Context.ai’s OAuth token store was compromised, the attacker acquired not a password, but a delegated session — the authority to act as that employee inside Vercel’s Google Workspace.

The blast radius was set by Vercel’s “sensitive vs. non-sensitive” environment variable model. Vercel encrypts all env vars at rest. But it has a distinction: env vars marked as “sensitive” are stored such that they cannot be read back even by the platform itself; non-sensitive env vars can be decrypted to plaintext for display in dashboards. The attacker couldn’t touch sensitive vars. Everything else — API keys, database credentials, signing keys that customers had never opted into the sensitive treatment — was readable by enumeration.
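To make the sensitive versus non-sensitive distinction concrete, here is a toy model of a two-tier env var store. It is a sketch for illustration only: `ToyEnvStore`, `put`, `read_back`, and `enumerate_readable` are hypothetical names, and nothing here reflects how Vercel actually implements storage or encryption.

```python
from dataclasses import dataclass


@dataclass
class EnvVar:
    name: str
    value: str
    sensitive: bool = False  # opt-in, mirroring the default described above


class ToyEnvStore:
    """Toy stand-in for a platform env var store (hypothetical, not Vercel's code)."""

    def __init__(self):
        self._vars = {}

    def put(self, name, value, sensitive=False):
        self._vars[name] = EnvVar(name, value, sensitive)

    def read_back(self, name):
        """Dashboard-style read: sensitive values are never returned."""
        var = self._vars[name]
        return None if var.sensitive else var.value

    def enumerate_readable(self):
        """Everything a session with dashboard-level access can list in plaintext."""
        return {n: v.value for n, v in self._vars.items() if not v.sensitive}


store = ToyEnvStore()
store.put("DATABASE_URL", "postgres://example")       # never marked sensitive
store.put("SIGNING_KEY", "s3cr3t", sensitive=True)    # explicitly opted in
print(store.enumerate_readable())
```

In this model the attacker's view is whatever `enumerate_readable` returns, which is why the default value of `sensitive` decides the blast radius.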

The velocity was the tell. Rauch’s public claim is that the attacker moved fast enough, with enough understanding of Vercel’s internal structure, that AI augmentation is the most likely explanation. This is interpretive — attribution-by-velocity is not a forensic artifact — but it lines up with a pattern Trend Micro, Microsoft, and others have flagged across 2026: LLM-driven reconnaissance that parallelizes schema discovery, endpoint probing, and credential-format recognition at rates that break detection baselines calibrated to human attackers.
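One way to picture a baseline that velocity can break is a sliding-window count of distinct resources touched by a single principal. The sketch below is an assumption about what such a detector might look like, not something taken from the Vercel or Trend Micro writeups; `EnumerationRateMonitor`, the window size, and the threshold are all hypothetical.

```python
from collections import deque


class EnumerationRateMonitor:
    """Counts distinct resources one principal touches within a sliding window."""

    def __init__(self, window_seconds, max_unique):
        self.window_seconds = window_seconds
        self.max_unique = max_unique      # hypothetical threshold; tune per environment
        self._events = deque()            # (timestamp, resource_id), oldest first

    def observe(self, timestamp, resource_id):
        """Record one access; return True if the window now exceeds the threshold."""
        self._events.append((timestamp, resource_id))
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while self._events and self._events[0][0] < cutoff:
            self._events.popleft()
        unique = {rid for _, rid in self._events}
        return len(unique) > self.max_unique


monitor = EnumerationRateMonitor(window_seconds=60, max_unique=3)
for t, project in enumerate(["proj-a", "proj-b", "proj-c", "proj-d"]):
    alert = monitor.observe(float(t), project)
print(alert)
```

A threshold calibrated on human click rates may sit far above what an automated operator needs to stay under, or far below what it can reach in seconds, so the number is worth revisiting rather than trusting.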

Breach RCA

Why the standard framings are incomplete

The Vercel breach is getting framed three ways in the security press. All three are partially right and all three miss the point for AI engineers.

Framing 1: “Third-party risk / shadow AI.” True. But this framing leads to the wrong remediation — better vendor questionnaires, annual SOC 2 reviews, procurement gates. None of that would have prevented this. Context.ai likely had SOC 2. A Vercel employee signed up as a consumer, bypassing procurement entirely. Point-in-time vendor assessments are worthless against active compromise.

Framing 2: “OAuth supply chain attack.” True. But OAuth supply chain attacks have been understood for years — Codecov, CircleCI, the Heroku/Travis CI incident. What’s new here isn’t the OAuth mechanism. It’s the category of vendor on the other side of the grant.

Framing 3: “Platform env var model needs defaults.” True. Vercel has already rolled out dashboard changes and is pushing customers toward the sensitive-variable feature. This is good, and every platform should copy it. But this is a Vercel-specific lesson, not an industry-wide one.

The framing that actually matters for AI engineers is the one none of these capture: the intermediary in this breach was an AI agent holding delegated identity, and the pattern that made it dangerous is the pattern every agent deployment replicates.

Context.ai markets itself as an agent platform. Per their own launch materials, its agents “dynamically traverse entire organizational knowledge bases.” To do that well, it needs broad, persistent access to Drive, Slack, email, code repos — and it acquires that access through long-lived OAuth grants from individual users. This is not a Context.ai pathology. It’s the architectural baseline for every agentic product shipping today: Cursor’s enterprise connectors, Glean’s agents, the exploding MCP server ecosystem, every “connect your Google Drive” button in every AI startup demo.

When the agent is compromised, the delegated identity is compromised. When the delegated identity is an enterprise Google Workspace account, the compromise propagates to everything that account can touch.

A useful handle: Delegated Identity Blast Radius

A shorthand for this pattern, which I’ll use for the rest of the piece: Delegated Identity Blast Radius (DIBR) — the scope of systems an attacker inherits by compromising an agent, equal to the union of all permissions granted to that agent across all delegating users and tenants.
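The definition is a set union, so it can be written down directly. This is a minimal sketch under my own assumptions: `Grant` and `delegated_identity_blast_radius` are hypothetical names, and I model one permission as a (tenant, user, scope) triple.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    tenant: str
    user: str
    scopes: frozenset


def delegated_identity_blast_radius(grants):
    """Union of (tenant, user, scope) triples across every grant the agent holds."""
    radius = set()
    for grant in grants:
        radius |= {(grant.tenant, grant.user, scope) for scope in grant.scopes}
    return radius


grants = [
    Grant("acme", "alice", frozenset({"drive.read", "mail.read"})),
    Grant("acme", "bob", frozenset({"drive.read"})),
    Grant("globex", "carol", frozenset({"drive.read"})),
]
for entry in sorted(delegated_identity_blast_radius(grants)):
    print(entry)
```

The point of the exercise is that the radius belongs to the agent, not to any one user: every additional delegating user or tenant only ever grows the set.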

DIBR has three properties that distinguish it from pre-agent OAuth risk.

1. Delegation collapses identity. A traditional SaaS integration might hold a scoped API key for “read Slack messages.” That’s a credential, and it’s bounded. An agent holding an OAuth grant with “Allow All” on Drive doesn’t hold a credential; it holds a session. If the agent’s vendor is compromised, the attacker is now the human. They can read everything the human can read, compose everything the human can compose, and move laterally through every system the human’s SSO reaches. The credential/identity distinction that security teams rely on stops working at the agent boundary.

2. Consent UX was never designed for agents. OAuth scopes describe what an app can do at authorization time. They don’t describe what an autonomous agent will do at runtime. A user approving “read your Drive” is not meaningfully consenting to “this agent will read your Drive, reason over every document, and potentially generate outputs that contain exfiltrated content.” Google’s own consent screen shows a list of scopes, not a behavioral model. In the Vercel case, Context.ai’s onboarding asked for Drive read access — exactly what the product needs to function. Nothing about the consent flow would flag this as risky. The scope was honest. The runtime behavior was the risk.

3. Blast radius scales with agent ambition. The more capable the agent, the worse the breach. A narrow AI — say, a meeting summarizer that only touches calendar events from the last 48 hours — has a bounded DIBR. A “universal office suite” agent marketed as being able to understand everything about how your organization works has, by design, maximal DIBR. The product’s value proposition and its worst-case blast radius are the same vector. Context.ai’s sales pitch — 300 connectors, cross-tool reasoning, organizational memory — is also a perfect description of its breach impact.

This is the uncomfortable part: you cannot reduce DIBR without reducing agent capability. The only knobs are scope minimization, token lifetime, and vendor security posture — and all three trade off against the reason you bought the agent in the first place.

This is not a Vercel problem. It’s an agent-era problem.

The instinct right now is to look at the Vercel incident and ask: “What did Vercel do wrong, and how do I avoid being Vercel?” That’s useful but it’s the wrong axis. Vercel’s specific mistakes — non-sensitive-by-default env vars, enterprise Google Workspace OAuth config permissive enough to allow broad grants — are patchable and already being patched.

The unpatchable part is structural. Right now, across the AI ecosystem:

Millions of developers have connected OpenAI, Anthropic, and other API keys to Cursor, Continue, Claude Code, Zed, and dozens of other AI coding tools — in many cases through OAuth to their GitHub identity, not just a local API key.

Every “connect your Google Drive” AI product demo creates a long-lived OAuth grant. Most of those grants are never revoked, never rotated, and never audited.

The Model Context Protocol (MCP) ecosystem is accelerating the pattern: MCP servers are effectively generalized delegation endpoints, and the current norm is to trust them implicitly because they run “locally” or “in the enterprise.”

Agentic IDE integrations — the kind that autonomously read, edit, and commit across an entire codebase — hold scopes that would horrify a security auditor if they were attached to a human service account.

Every one of these is a future Context.ai, waiting for its Lumma Stealer moment. The attack pattern is replicable. The defenses, so far, are not standardized.

There are two structural responses.

Product-side (if you build agent tools): Default to the narrowest scope that lets your product demo, not the scope your product’s full feature set needs. Expose scope minimization as a first-class UI element (“Context.ai full access” versus “Context.ai research only”) so users can make real trust decisions. Issue short-lived tokens and require explicit re-authorization for high-impact actions. Invalidate tokens on any vendor-side incident, not just on user-triggered rotation. Publish an incident response SLA for token compromise.
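The short-lived token and re-authorization ideas can be sketched as a single authorization check. Treat this as an illustration under stated assumptions: `AgentToken`, `authorize`, the action names in `HIGH_IMPACT_ACTIONS`, and the five-minute re-authorization window are all hypothetical, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical action names; a real product would define its own list.
HIGH_IMPACT_ACTIONS = frozenset({"send_email", "delete_file", "share_externally"})


@dataclass
class AgentToken:
    scopes: frozenset
    issued_at: float                          # seconds since epoch
    ttl_seconds: float                        # short lifetime: minutes, not months
    reauthorized_at: Optional[float] = None   # last explicit user re-approval


def authorize(token, action, required_scope, now, reauth_window_seconds=300.0):
    """Return "allow" or a "deny:<reason>" string for one agent action."""
    if now - token.issued_at > token.ttl_seconds:
        return "deny:expired"
    if required_scope not in token.scopes:
        return "deny:scope"
    if action in HIGH_IMPACT_ACTIONS:
        fresh = (
            token.reauthorized_at is not None
            and now - token.reauthorized_at <= reauth_window_seconds
        )
        if not fresh:
            return "deny:reauth_required"
    return "allow"


token = AgentToken(frozenset({"drive.read", "mail.send"}), issued_at=1000.0, ttl_seconds=900.0)
print(authorize(token, "read_file", "drive.read", now=1100.0))
print(authorize(token, "send_email", "mail.send", now=1100.0))
```

Under this design a stolen token is useful only until it expires, and it cannot perform a high-impact action unless the user re-approved recently.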

Deployment-side (if you ship software that depends on agent vendors): Treat every agent vendor’s breach as your breach. The Vercel env var issue isn’t unique — audit whether your platform’s secret store is sensitive-by-default or sensitive-by-opt-in, and switch the defaults. Build a disaster recovery playbook for “assume our primary AI vendor is compromised right now.” Most teams don’t have one. The ones that will survive the next incident in this category are the ones that already wrote it.

What to change this week

If you’re reading this and asking “OK, what do I do Tuesday morning” — here is the ordered list. This is the most concrete thing in the piece, so don’t skip it.

1. Audit your Google Workspace OAuth grants right now. In admin.google.com, go to Security → Access and data control → API controls → App access control. Export the full list. For every app, check the scopes. Secure Annex researcher John Tuckner put it sharply: spend a week asking yourself which scopes you’ve allowed and whether you recognize all the services. Most teams have never done this exercise and are shocked by what comes back.

2. Identify every OAuth grant with “broad” or “Allow All” scopes on Drive, Mail, or Calendar. These are your highest-DIBR connections. Revoke the ones you don’t actively use. For the ones you keep, set a calendar reminder to re-audit quarterly. Treat “broad Drive access” as a permission on par with production database access, because in breach terms it is.

3. Check whether your platform’s secrets are sensitive-by-default. Vercel’s model — sensitive is opt-in — is common. Netlify, Render, Railway, and Fly.io all have variations on this pattern. Go into your secret store, identify every non-sensitive secret that carries production access, and either rotate-and-mark-sensitive or move to a dedicated secrets manager (AWS Secrets Manager, GCP Secret Manager, Doppler, Infisical, 1Password).

4. If you ship an agent product, publish your scope minimization story. This is both a security posture and a differentiation opportunity. Buyers in 2026 are going to start asking “what happens when you get breached” — teams that have a good answer will win. Teams that don’t, won’t.

5. If you run agents in production, assume the AI vendor is already compromised and plan the blast radius. The exercise: pick your most-connected agent. Write down every credential, scope, and system it touches. Imagine you wake up tomorrow to a vendor breach disclosure. Which secrets rotate first? Which systems need re-authorization? Which customers need notification? If this exercise takes more than four hours, you don’t have a runbook.

6. Recalibrate your detection baselines for AI-accelerated enumeration. If your SIEM alerts are tuned to “human-paced” attacker behavior — unique resource enumeration rate, error-to-success ratio recovery — they may under-alert against AI-augmented operators. Trend Micro’s writeup has specific guidance on thresholds to revisit. This is worth a security team afternoon.
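For steps 1 and 2, once the grant list is exported, flagging the broad ones is a few lines of code. The sketch below assumes each exported record has already been parsed into a dict with `app`, `user`, and `scopes` keys; that shape is my assumption, not the admin console's export format. The scope URLs are real Google OAuth scopes, but the choice of which ones count as “broad” is a starting point to adjust.

```python
# Scopes treated as "broad" for this audit. The list is an assumption; extend it
# to match your own risk model.
BROAD_SCOPES = frozenset({
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/drive.readonly",
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/gmail.readonly",
    "https://www.googleapis.com/auth/calendar",
})


def flag_broad_grants(grants):
    """Return grants holding at least one broad scope, most broad scopes first."""
    flagged = []
    for grant in grants:
        broad = sorted(set(grant["scopes"]) & BROAD_SCOPES)
        if broad:
            flagged.append(
                {"app": grant["app"], "user": grant["user"], "broad_scopes": broad}
            )
    return sorted(flagged, key=lambda f: len(f["broad_scopes"]), reverse=True)


# Hypothetical export rows for illustration.
exported = [
    {"app": "Notes", "user": "a@example.com",
     "scopes": ["https://www.googleapis.com/auth/drive.file"]},
    {"app": "AgentSuite", "user": "b@example.com",
     "scopes": ["https://www.googleapis.com/auth/drive.readonly",
                "https://mail.google.com/"]},
]
for row in flag_broad_grants(exported):
    print(row["app"], row["user"], row["broad_scopes"])
```

The output of a script like this is the revoke-or-justify list from step 2: every row is either an integration someone can defend or a grant to remove.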

What to watch

Two questions will shape the next six months.

Will any OAuth provider ship “agent consent” as a distinct flow? Google, Microsoft, and Okta have all seen the signal that agent grants differ in character from traditional app grants. What the ecosystem needs is a new consent primitive: something like a “delegated agent session” with a mandatory short lifetime, mandatory re-authorization for high-impact actions, and a scope model expressive enough to describe runtime behavior, not just capability surface. The first provider to ship this will reset the security baseline for every agent product downstream.

Will platform providers make sensitive-by-default the standard? Vercel is clearly moving in that direction post-incident. If competitors follow, the industry gets safer. If they don’t, Vercel customers end up paying a security tax while customers of other platforms keep eating the old default. Watch the next 60 days of product announcements from Netlify, Render, and Cloudflare.

The Vercel breach is going to be cited for years. Not because the technical details are novel — they mostly aren’t — but because it’s the first high-profile case where the intermediary was an AI agent holding delegated identity, and the ecosystem reaction will set precedent for how we treat agent vendors from here on.

If you’re building agents, you have a few months to fix your defaults before someone else’s breach becomes your problem. Use them.