The Brain Isn’t the LLM: How HockeyStack Built Revenue Agents

HockeyStack just raised $50M to scale a vertical agent platform whose reasoning engine is a custom ML pipeline — not a frontier model. Why that matters for anyone building agents.

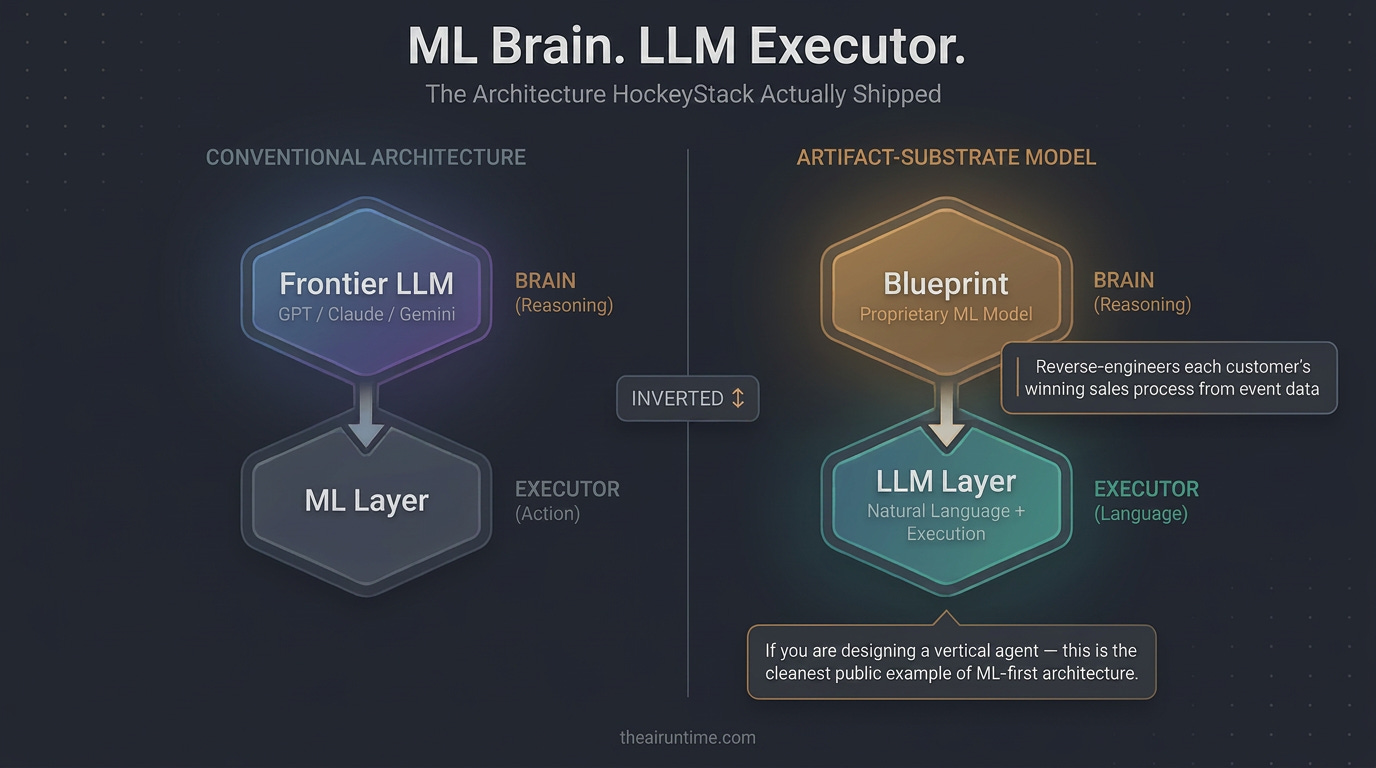

TL;DR - HockeyStack closed $50M from Bessemer Venture Partners, Y Combinator, and Uncorrelated Ventures to scale Revenue Agents — autonomous AI agents that work every deal and account 24/7 across new business, prospecting, and expansion. The interesting architectural choice: HockeyStack’s reasoning engine is not a frontier LLM. It is a proprietary ML model called the Blueprint that reverse-engineers each customer’s winning sales process from their event data. The LLM sits downstream as the execution and natural language layer. If you are designing a vertical agent, HockeyStack is the cleanest public example of an “ML brain, LLM executor” architecture — the inverse of what most teams ship.

What HockeyStack Actually Sells

HockeyStack started in 2021 as a B2B revenue analytics and attribution platform: the kind of tool that stitches Salesforce, HubSpot, ad platforms, Gong, and product data into one buyer journey so a CMO can answer “which campaign actually drove pipeline?” The founders, Emir Atlı, Arda Bulut, and CEO Buğra Gündüz, dropped out of college in Turkey, went through Y Combinator, and built the company into a Series A attribution vendor.

That is the company HockeyStack used to be. The company it is now is something different.

In April 2026, HockeyStack announced a $50M raise and the launch of “Revenue Agents for the Enterprise.” The pitch: per-deal autonomous agents that monitor every live opportunity against a learned pattern of how the customer’s own top reps win, execute the next-best action, and loop in the human rep when judgment is required. The company says its customer list spans Fortune 100 revenue teams and includes 8x8, AppsFlyer, Outreach, Yext, and Sendoso, with more than 300 customers reached in under two years.

On the surface this is a category bet: HockeyStack is positioning Revenue Agents as a new product category sitting alongside (or above) attribution, CRM, and revenue intelligence. Underneath, the bet is architectural, and that is the part worth studying.

The Blueprint Is the Brain

The single most useful sentence on HockeyStack’s site is in their description of the platform: agents follow a “validated, data-grounded process.” Read past the marketing voice and notice what is not being claimed. The agent is not reasoning from first principles each turn. It is not asking an LLM “what should I do next on this deal?” and trusting whatever comes back. It is executing against a blueprint — a learned, structured representation of the customer’s winning sales process.

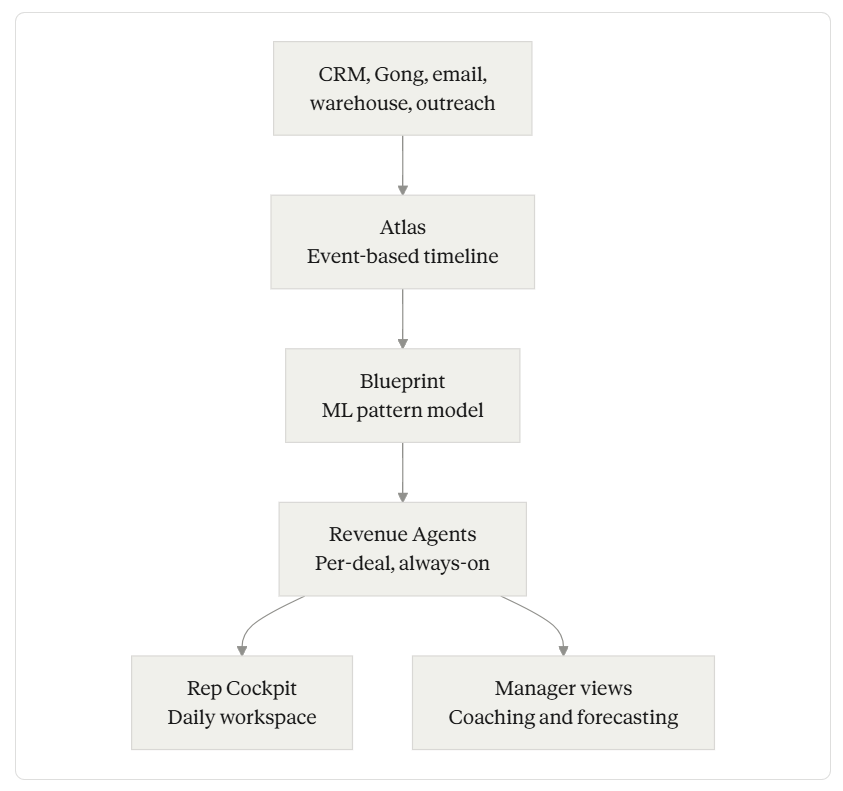

The Blueprint is HockeyStack’s proprietary ML model. Per their own description, it is built by analyzing every won and lost deal, every touchpoint, and every signal in the customer’s data to surface specific, validated patterns. Each Blueprint is unique to a revenue motion or business unit and updates continuously as new deals close and market conditions shift.
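HockeyStack has not published how the Blueprint is trained, so the following is only a toy illustration of what “surfacing patterns from won and lost deals” could mean in its simplest form. It counts ordered event pairs and reports the win rate of each pair that appears in enough deals. The deal data, the event names, and the `min_support` threshold are all assumptions made up for this sketch.

```python
from collections import Counter

# Toy deal history: (ordered event types, outcome). All data is invented.
DEALS = [
    (["demo", "security_review", "exec_meeting"], "won"),
    (["demo", "security_review", "pricing_call"], "won"),
    (["demo", "pricing_call"], "lost"),
    (["demo", "pricing_call", "exec_meeting"], "lost"),
]


def transition_win_rates(deals, min_support=2):
    """Win rate for each ordered event pair (a -> b) seen in at least min_support deals."""
    seen, won = Counter(), Counter()
    for events, outcome in deals:
        # Adjacent pairs keep the order of events; count each pair once per deal.
        for pair in set(zip(events, events[1:])):
            seen[pair] += 1
            if outcome == "won":
                won[pair] += 1
    return {pair: won[pair] / n for pair, n in seen.items() if n >= min_support}


rates = transition_win_rates(DEALS)
for (first, second), rate in sorted(rates.items()):
    print(f"{first} -> {second}: win rate {rate:.0%}")
```

On this toy data, only two transitions clear the support threshold: `demo -> security_review` appears only in won deals and `demo -> pricing_call` only in lost ones. A production system would need far more data and proper statistical care, but the shape of the output, a set of validated sequences rather than a prompt, is the point.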

Crucially, the Blueprint is not a fine-tuned LLM. It is described as a machine learning model that continuously learns on new outcomes — an event-chain pattern-mining pipeline trained on the customer’s own deal history. The LLM enters the picture downstream: surfacing tasks in natural language to reps, generating outreach copy, and handling the human-facing surface. The reasoning about what should happen on a deal is the Blueprint’s job.

This inverts the dominant pattern in AI agent products. Most “AI for X” startups treat a frontier LLM as the reasoning engine and bolt on retrieval, tools, and memory around it. HockeyStack treats a domain-specific ML pipeline as the reasoning engine and uses the LLM as the execution and language layer.
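A minimal sketch of that split, under stated assumptions: `Decision`, `run_turn`, `toy_brain`, and `echo_llm` are hypothetical names invented here, and the “brain” is a hard-coded rule standing in for a trained model. What the sketch shows is the boundary: the language model receives a decision that has already been made and only phrases it.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Decision:
    action: str  # what should happen next on the deal
    reason: str  # structured justification from the pattern model


def run_turn(
    events: Sequence[str],
    brain: Callable[[Sequence[str]], Decision],
    llm: Callable[[str], str],
) -> tuple[Decision, str]:
    """The brain decides; the LLM only turns the decision into rep-facing language."""
    decision = brain(events)
    prompt = (
        "Phrase this task for a sales rep. "
        f"Action: {decision.action}. Reason: {decision.reason}."
    )
    return decision, llm(prompt)


def toy_brain(events: Sequence[str]) -> Decision:
    # Stand-in for a learned model: a single hard-coded rule.
    if "security_review" in events:
        return Decision("no_action", "deal matches the learned pattern")
    return Decision("book_security_review", "won deals usually include a security review")


def echo_llm(prompt: str) -> str:
    # Stand-in for a real LLM call so the sketch runs offline.
    return f"[draft] {prompt}"


decision, message = run_turn(["demo", "pricing_call"], toy_brain, echo_llm)
print(decision.action)
print(message)
```

Because the decision is a plain data object produced before any text generation, it can be logged, tested, and audited independently of whichever LLM sits behind `llm`.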

Detail belongs in the prose, not the diagram. Three components carry the real weight.

Atlas: The Event-Based Substrate

Most CRMs are record-based: a deal is a row, with fields. HockeyStack’s foundation, called Atlas, is event-based: every interaction is a timestamped event resolved to one identity graph. Per their own product page, Atlas unifies every interaction into a single event-based timeline with full identity resolution — CRM, outreach sequences, call recordings, web activity, and the data warehouse all resolved to one time-stamped source of truth.

This matters because the Blueprint cannot mine winning patterns from flattened CRM fields. As contentgrip’s coverage of the raise observed, many meaningful buyer and seller signals are inherently event-like — web activity, product usage, conversation outcomes, buying-committee changes — and when those signals get flattened into static fields, teams lose the sequence, timing, and causality that define a winning play. An event-based model preserves them.

For builders, the lesson is upstream of agent design: if your reasoning layer needs sequence and causality (and most consequential agent decisions do), your data layer has to preserve them. You cannot retrofit event semantics onto a record-based store after the fact without losing fidelity.
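To make the fidelity loss concrete, here is a small sketch with two invented deals that contain the same touches in opposite order. The `Event` class and the `flatten` and `timeline` helpers are hypothetical and are not a description of Atlas; they only contrast a record-style view with an event-style view.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    deal_id: str
    kind: str
    at: datetime


def flatten(events):
    """Record-style view: one row of fields per deal. Order and timing are gone."""
    return {"deal_id": events[0].deal_id, "touches": sorted({e.kind for e in events})}


def timeline(events):
    """Event-style view: event kinds in the order they happened."""
    return [e.kind for e in sorted(events, key=lambda e: e.at)]


deal_a = [
    Event("A", "demo", datetime(2026, 1, 5)),
    Event("A", "pricing_call", datetime(2026, 1, 12)),
]
deal_b = [
    Event("B", "pricing_call", datetime(2026, 1, 5)),
    Event("B", "demo", datetime(2026, 1, 12)),
]

print(flatten(deal_a)["touches"] == flatten(deal_b)["touches"])  # same fields
print(timeline(deal_a) == timeline(deal_b))                      # different sequences
```

The flattened rows are indistinguishable, while the timelines differ. Any model that only sees the flattened rows cannot learn that pricing before the demo behaves differently from pricing after it.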

Revenue Agents: Per-Deal, Always-On

The agent layer is where the Blueprint gets executed. HockeyStack’s framing: dedicated agents monitor every deal and account, execute the right moves autonomously, and flag risks, with individual Revenue Agents assigned to each deal and account, operating around the clock.

Concrete agent behaviors HockeyStack has shipped, per their agents page: identifying missing stakeholders and triggering outreach to unblock deals, detecting competitor dissatisfaction signals and launching displacement outreach, redistributing account attention based on revenue risk, and identifying when messaging stops converting. Each behavior is an instance of “deal deviates from the Blueprint pattern → agent acts.”
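The “deviates from the pattern, then acts” loop can be sketched in a few lines. Everything here is an assumption for illustration: the pattern is a fixed list rather than a learned model, and the step names and the action table are invented. HockeyStack has not published its detection logic.

```python
from typing import Optional, Sequence

# Hypothetical learned pattern and step-to-action table; both are invented.
WINNING_PATTERN = ["demo", "security_review", "exec_meeting"]
ACTION_FOR_MISSING_STEP = {
    "security_review": "invite_security_stakeholder",
    "exec_meeting": "request_exec_sponsor",
}


def first_missing_step(pattern: Sequence[str], events: Sequence[str]) -> Optional[str]:
    """Return the first pattern step that does not occur, in order, within events."""
    remaining = iter(events)
    for step in pattern:
        # 'in' on an iterator consumes it up to the match, which enforces ordering.
        if step not in remaining:
            return step
    return None


def next_action(pattern: Sequence[str], events: Sequence[str]) -> Optional[str]:
    """Map a deviation to an action, or return None when the deal is on pattern."""
    missing = first_missing_step(pattern, events)
    if missing is None:
        return None
    return ACTION_FOR_MISSING_STEP.get(missing, "flag_for_rep")


print(next_action(WINNING_PATTERN, ["demo", "pricing_call", "exec_meeting"]))
print(next_action(WINNING_PATTERN, ["demo", "security_review", "exec_meeting"]))
```

Note the fallback to `flag_for_rep`: when the deviation has no mapped action, the sketch hands the deal to a human instead of guessing.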

The reps interact with this through a surface called the Rep Cockpit — a daily workspace where agents surface direct tasks with reasoning. Senior leaders get separate Manager views for coaching and pipeline forecasting. This shape — agent surfaces work, human reviews and acts — is the same shape Rogo’s Felix uses with email as the substrate. Different surface, same async-handoff pattern.

HockeyStack also describes a multi-agent orchestration model: one agent retrieves data, another runs analysis, a third validates the output before the user sees it. The validator step is doing real work — it is the guardrail that catches the LLM hallucinating a stakeholder or fabricating an account fact before that error propagates into a rep’s outreach.
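A retrieve, analyze, validate chain along those lines might look like the sketch below. The CRM contents, the function names, and the assumption that the drafting step returns structured output (body text plus the people it references) are all hypothetical; the LLM calls are stubbed so the example runs offline.

```python
# Hypothetical source of truth for one account; names are invented.
CRM = {"acme": {"contacts": {"Dana Reyes", "Sam Okafor"}}}


def retrieve(account_id):
    """Step 1: fetch the known facts for the account."""
    return CRM[account_id]


def analyze(facts, llm):
    """Step 2: ask the (stubbed) LLM for a structured draft."""
    return llm(facts)


def validate(draft, facts):
    """Step 3: list any referenced stakeholders that are not known contacts."""
    return sorted(set(draft["stakeholders"]) - facts["contacts"])


def pipeline(account_id, llm):
    facts = retrieve(account_id)
    draft = analyze(facts, llm)
    unknown = validate(draft, facts)
    if unknown:
        # Block the draft before it can reach a rep's outreach.
        return {"status": "blocked", "unknown_stakeholders": unknown}
    return {"status": "approved", "body": draft["body"]}


def hallucinating_llm(facts):
    return {"body": "Looping in Pat Lin from procurement.", "stakeholders": ["Pat Lin"]}


def grounded_llm(facts):
    return {"body": "Following up with Dana Reyes.", "stakeholders": ["Dana Reyes"]}


print(pipeline("acme", hallucinating_llm))
print(pipeline("acme", grounded_llm))
```

The validator here is deliberately not another LLM call. A deterministic check against the source of truth is the cheapest kind of guardrail, and it fails loudly instead of plausibly.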

The Reverse-Engineering Bet

There is a strong claim underneath all of this, and HockeyStack states it plainly: your top performers run plays that live in their heads, and the Blueprint finds and deploys them across your entire team. The bet is that “what your best rep does” is a pattern recoverable from the event stream — not just tribal knowledge.

This is non-obvious. Sales has been resistant to standardization because the tacit-to-explicit conversion loses something. Whether HockeyStack’s pattern mining actually captures what the best reps do, or just captures the surface signals correlated with their wins, is the empirical question that will determine whether this category sticks. As one industry analyst noted in coverage of the raise, enterprises will look for clear proof that an event-based architecture improves forecast accuracy, sales productivity, or expansion conversion, not just that it produces more data. That bar has not yet been independently cleared.

But it is the right bet to be making. If the architecture works, the moat is significant: every customer’s Blueprint is a one-of-one asset trained on that customer’s data, is hard to rip out, and improves as it ingests more deals.

Two Architectures for Vertical Agents

It is worth naming the two patterns explicitly, because they map cleanly onto a choice every vertical-agent builder is now making.

Pattern A — Frontier LLM as brain, harness around it. The reasoning engine is a frontier model. The vertical work is in the harness: tool layer, evals, output formatters, audit trail, data integrations. When a better frontier model ships, you swap the engine. Examples: most agentic platforms today, including the agent harness several finance and legal AI companies have publicly described.

Pattern B — Domain ML as brain, LLM as executor. The reasoning engine is a custom ML pipeline trained on customer data. The LLM handles natural language interfaces, generation, and tool calling. The vertical work is in the data pipeline, the pattern model, and the per-customer training loop. HockeyStack is the clearest public example.

Neither is universally right. Pattern A is faster to ship, benefits automatically from frontier-model gains, and is easier to swap. Pattern B is more defensible if your domain has rich event data and recoverable patterns, and its decisions can be made more repeatable and auditable than free-form LLM reasoning.

In Model Reliability Engineering terms: Pattern A invests heavily in Harness Engineering. Pattern B invests heavily in Context Engineering, taken to its logical extreme — the context isn’t just retrieved, it’s mined and structured into a deterministic decision pattern before the LLM ever runs.

What’s Actually Being Transformed

Sales orgs do not get replaced; their middle gets compressed. The classic problem HockeyStack is targeting — the best rep closes 2-3x more than the median, and nobody knows why — has been a fixture of sales leadership for thirty years. The traditional response was process documentation, MEDDIC training, and rep shadowing, and it did not close the gap because tacit knowledge resists capture.

If Revenue Agents work as advertised, what changes is not headcount; it is the variance band. New reps execute closer to top-quartile from week one because the agent surfaces the next move. Top reps spend less time on context-stitching and more time on the relationship work that actually requires a human. (One HockeyStack customer testimonial cites three hours a day of cross-tool data wrangling eliminated; this is vendor-curated and best treated as directional rather than benchmarked.) Managers run pipeline reviews against a model rather than vibes.

The honest caveat: this is the promise. As of April 2026, the public evidence is the customer list, the funding round, and HockeyStack’s own product descriptions. Independent benchmarks of forecast-accuracy lift or expansion-conversion lift do not yet exist publicly. Buyers in this space should ask for them.

Five Lessons If You Are Building a Vertical Agent

Decide which brain you are building. Pattern A and Pattern B are different companies with different moats. Pick deliberately, not by default.

Event-based data preserves causality. Record-based data destroys it. If your agent needs to reason about why something happened, your substrate has to keep the sequence.

The validator agent is doing real work. Multi-agent orchestration with a dedicated check step is a cheap way to cut hallucination risk before output reaches the user.

Per-customer learning is a moat. Per-customer training is hard. A model that gets better as the customer uses it is structurally defensible — but only if you can run that loop without ongoing human curation.

Async surfaces beat new UIs. HockeyStack’s Rep Cockpit and Manager views, like Rogo’s email interface, surface agent work where the user already lives. Adoption follows the path of least friction.

What to Do This Week

Pick a workflow you have watched a domain expert do — one with rich, structured signals leading up to the decision. Now ask: could a small ML model trained on past instances of this workflow predict the right next action better than an LLM prompted with the same context?

If yes, you have a candidate for Pattern B. The investment is in the data pipeline and the model, not the prompt.

If no — if the signals are sparse, unstructured, or judgment-dominated — you are in Pattern A territory, and your work is in the harness around the frontier model.
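One way to make that question concrete is a holdout comparison. The sketch below fits a deliberately tiny model (the most common next action given the last event) and scores it against a second predictor on held-out examples. The data is invented, and `llm_stub` is a placeholder that always returns the same answer, so the scores say nothing about real LLMs; swap in your own history and a real LLM-backed predictor to get a meaningful number.

```python
from collections import Counter, defaultdict

# Invented examples: (events so far, the action the expert took next).
TRAIN = [
    (["demo"], "send_pricing"),
    (["demo"], "send_pricing"),
    (["demo", "pricing_call"], "book_security_review"),
    (["pricing_call"], "book_security_review"),
]
HOLDOUT = [
    (["intro", "demo"], "send_pricing"),
    (["demo", "pricing_call"], "book_security_review"),
]


def fit_last_event_model(train):
    """Map each 'last event' to the most common next action in the training set."""
    counts = defaultdict(Counter)
    for events, action in train:
        counts[events[-1]][action] += 1
    table = {last: c.most_common(1)[0][0] for last, c in counts.items()}
    return lambda events: table.get(events[-1], "unknown")


def accuracy(predict, examples):
    """Fraction of examples where the predictor matches the expert's action."""
    return sum(predict(events) == action for events, action in examples) / len(examples)


def llm_stub(events):
    # Placeholder for an LLM prompted with the same context.
    return "send_pricing"


small_model = fit_last_event_model(TRAIN)
scores = {
    "small_model": accuracy(small_model, HOLDOUT),
    "llm": accuracy(llm_stub, HOLDOUT),
}
print(scores)
```

If the small model wins on your real holdout set by a clear margin, that is evidence for Pattern B. If the two are close, or the LLM wins, the harness is probably where your effort belongs.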

The mistake to avoid is the third pattern: a thin LLM wrapper that pretends to be either. That is the architecture that gets disrupted next quarter when the next frontier model ships and removes whatever differentiation the wrapper claimed.