The Cost-Per-Completed-Task Era

Per-token pricing was the right unit when API calls were single-shot. Is it still the right unit when your agent runs adaptive thinking, fans out tool calls, spawns sub-agents, and retries on partial failure?

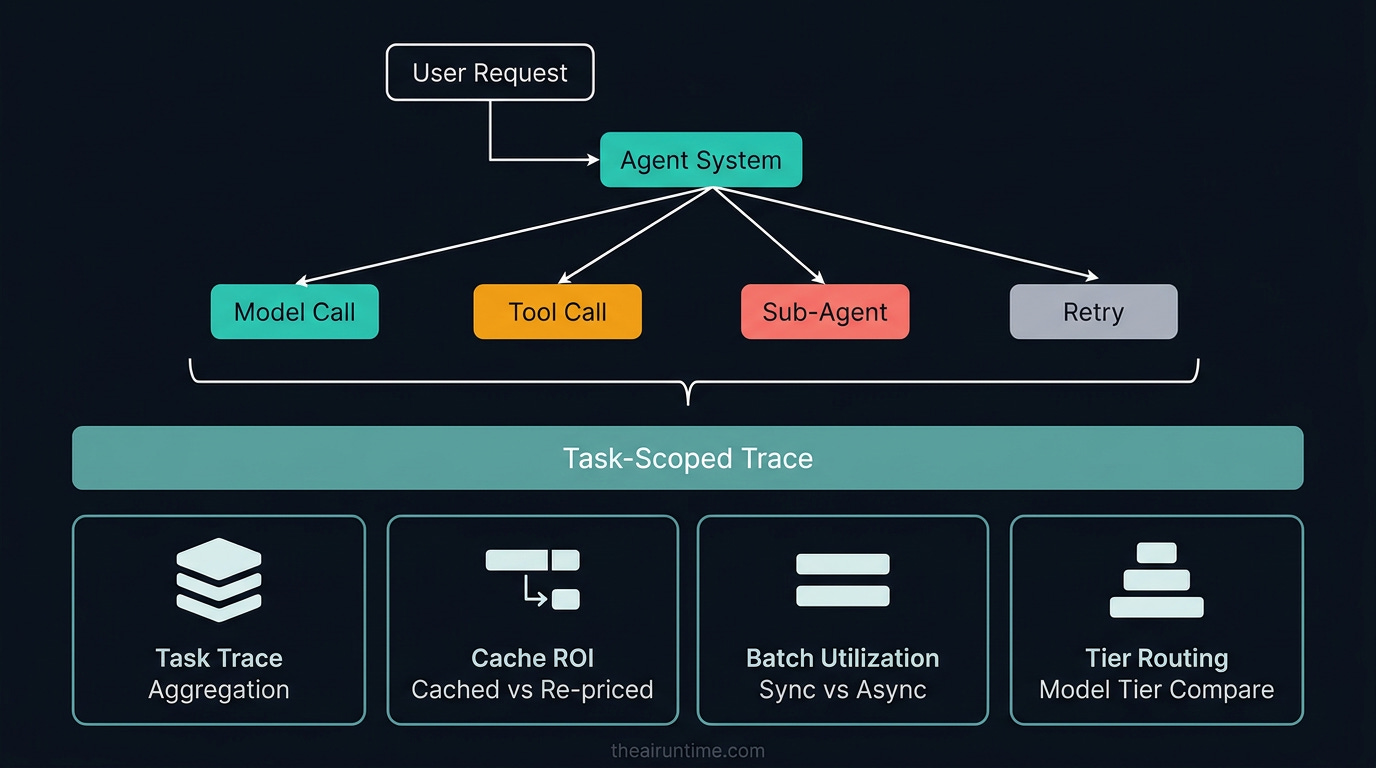

TL;DR - Frontier API pricing is still quoted in dollars per million input and output tokens and the FinOps tooling enterprises are deploying still rolls those numbers up into a “spend per service” view. That view is becoming meaningless. A single user request to a modern agent now triggers adaptive thinking (variable token counts the user did not author), tool calls (which produce more model context, which produce more thinking), sub-agent fan-out (which compounds the first two), and retries on partial failure (which multiply everything by the number of attempts). On the Box deployment Anthropic cited in the Opus 4.7 launch, 56% fewer model calls and 50% fewer tool calls produced lower per-task spend even with a ~1.0–1.35x tokenizer increase. The right unit is cost-per-completed-task (CPCT), measured against an SLO that defines “completed.” Building it requires four instruments most teams do not have yet: a task-scoped trace that aggregates every model and tool call back to a single user-visible outcome, a prompt-cache ROI line that distinguishes cached input from re-priced input, a batch-API utilization line that measures the 50% discount you are or are not capturing, and a model-tier routing line that tells you the per-task delta between your defaults and the next-cheaper tier that would still hit the SLO. Without those four, you cannot make rational economic decisions about effort levels, task budgets, or model upgrades. If your monthly bill went up 40% and traffic was flat, your CPCT is doing something your token graph cannot see.

The metric we kept after it stopped being right

For three years tokens were the right unit. A user typed a prompt, the API returned a completion, the bill totaled the tokens in plus tokens out. Dashboards charted tokens-per-day. SREs alerted on tokens-per-second. Engineering tracked tokens-per-feature. The unit matched the work, and the work matched the user request.

That alignment broke quietly somewhere around 2024 and conclusively by mid-2026. The work a single user request now does is not a token sum — it is a tree. A user asks “review this codebase and propose a refactor plan.” Opus 4.7 with xhigh effort and adaptive thinking enabled runs its own reasoning, calls a file-read tool ten times, calls a grep tool five times, spawns a sub-agent to evaluate one risky change in isolation, retries one tool call that returned an empty result, and emits a structured plan. The token count for that request reflects all of the above; the user only authored the prompt.

The token unit hasn’t gotten less accurate. It has gotten less useful. Two requests that both spent 80,000 tokens can have radically different value: one finished the user’s task cleanly, the other looped on the wrong sub-problem and produced a half-answer that the user had to throw away. Per-token spend cannot tell those two apart. Per-task spend can.

The model providers know this, which is part of why the most architecturally interesting feature in the Opus 4.7 release — covered in detail in Claude Opus 4.7: The Production Engineer’s Breakdown — was task budgets. A task budget is the first time the platform itself has given an agent visibility into its own cost ceiling for a complete loop. The metric the model now optimizes against is the metric finance should have been tracking all along.

Why per-token math breaks for agents

Five factors decouple per-token spend from per-task value, and each pulls in a different direction. The result is that any single token graph hides at least one of them.

Adaptive thinking is variable cost the user did not author. A request with adaptive thinking turned on runs more thinking on harder problems and less on easier ones. That is the design intent. The cost consequence is that an identical input prompt can produce 5,000 thinking tokens on one call and 35,000 on the next, depending on how the model judges the difficulty. Token-per-call distributions widen. Per-token cost trends become noisy in a way the previous generation’s fixed-completion calls were not.

Tool calls produce model context, which produces more thinking. Every tool call returns a payload that enters the model’s context window. A file-read returning 4,000 tokens of source code is now 4,000 input tokens the user did not author. The next model call processes those 4,000 tokens. If the model decides to read another file based on that context, the cycle continues. On agentic coding workloads, tool-result tokens routinely exceed user-prompt tokens by a factor of ten to fifty.
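The compounding is easy to see with a little arithmetic. The sketch below assumes the harness re-sends the full conversation on every model call, with no caching and no context trimming, and it ignores the model's own output tokens. The function name and the token counts are illustrative, not taken from any real trace.

```python
def cumulative_input_tokens(prompt_tokens: int, tool_result_tokens: list[int]) -> int:
    """Total input tokens billed across one tool loop.

    Assumes one model call before the first tool call and one model call
    after each tool result, with the whole context re-sent every time.
    """
    context = prompt_tokens
    billed = context  # the first model call sees only the user's prompt
    for result in tool_result_tokens:
        context += result  # the tool payload enters the context window
        billed += context  # the next model call re-reads everything so far
    return billed


# A 500-token prompt followed by three 4,000-token file reads.
print(cumulative_input_tokens(500, [4_000, 4_000, 4_000]))  # 26000
```

Under these assumptions the user authored 500 tokens and the loop billed 26,000 input tokens, because each tool result is paid for again on every later call.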

Sub-agent fan-out compounds the first two. When the harness spawns a sub-agent to evaluate one sub-task in isolation, that sub-agent runs its own thinking against its own context window, often with its own tool calls and its own retries. The Hippocratic Polaris 3.0 architecture covered in How Vertical Agents Self-Improve in Production runs a 22-LLM constellation around a primary conversational model. Hippocratic doesn’t bill that way externally, but the internal accounting is non-trivial: a single patient call invokes more than twenty models, each subordinate to the primary model and each consuming its own token budget.

Retries on partial failure multiply everything by the number of attempts. A tool call that 429s and retries doubles the cost of that step. A judge that scores the agent’s output as failing and triggers a re-run doubles or triples the cost of the entire task. Retry policies are good engineering — they are the difference between a flaky agent and a reliable one — but they are also a quiet multiplier on the bill.
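As a rough way to size that multiplier, the sketch below computes the expected number of attempts for a step. It assumes each attempt fails independently with the same probability and that the harness gives up after a fixed number of tries; both are simplifications, and the names are hypothetical.

```python
def expected_attempts(failure_rate: float, max_attempts: int) -> float:
    """Expected attempts per step under a capped retry policy.

    Assumes independent attempts with a constant failure probability.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    # Attempt k+1 only runs if the first k attempts all failed.
    return sum(failure_rate ** k for k in range(max_attempts))


# A step that fails 20% of the time, with up to 3 attempts.
print(round(expected_attempts(0.2, 3), 2))  # 1.24
```

Multiplying a step's cost by this number gives its expected cost under the retry policy. A judge-triggered re-run applies the same multiplier to the whole task rather than to one step.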

Prompt caching and batch APIs introduce two-tiered economics. A token that hits the prompt cache costs roughly 10% of an uncached token on Anthropic’s pricing. A token submitted through batch processing costs 50%. Both are massive discounts, but they only apply to portions of the traffic that fit specific shapes (long stable system prompts for caching, latency-tolerant work for batch). Your bill’s relationship to your traffic now depends on the cache hit rate and the batch utilization, and neither of those is visible from a tokens-per-day chart.
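A minimal sketch of the two-tiered effect, using the approximate multipliers quoted above (cached input at about 10% of the uncached price, batch at 50%). It assumes batch and interactive traffic are disjoint, that the cache hit rate applies only to the interactive share, and it ignores cache-write premiums. The function and parameter names are illustrative.

```python
def blended_input_multiplier(
    cache_hit_rate: float,
    batch_share: float,
    cache_multiplier: float = 0.10,  # approximate cached-read price, per the text
    batch_multiplier: float = 0.50,  # batch discount, per the text
) -> float:
    """Average price paid per input token, relative to the list price."""
    interactive_share = 1.0 - batch_share
    # Interactive tokens are either cache hits or full-price input.
    interactive = cache_hit_rate * cache_multiplier + (1.0 - cache_hit_rate)
    return batch_share * batch_multiplier + interactive_share * interactive


# Same token volume, same batch share, two different cache hit rates.
low = blended_input_multiplier(cache_hit_rate=0.40, batch_share=0.20)
high = blended_input_multiplier(cache_hit_rate=0.60, batch_share=0.20)
print(round(low, 3), round(high, 3))  # 0.612 0.468
```

In this toy case the tokens-per-day chart would be identical for both scenarios, yet the input bill at the lower hit rate is roughly 30% higher.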

The composite effect: token graphs that look identical can hide cost-per-task that diverges by 3–5x. Token graphs that look like cost spikes can be the system getting more work done per request, not paying more for the same work. Either direction is invisible without CPCT instrumentation.

The four instruments

Building CPCT visibility takes four pieces. Each one is a small engineering investment relative to model spend; none of them require a new vendor.

1. Task-scoped traces

Every model call and every tool call carries a stable task_id that ties back to a single user-visible outcome. A “task” in this sense is whatever the product defines as a unit of completed work: an answered support ticket, a generated PR, a resolved incident, a finalized prior auth decision. The choice of granularity matters less than its consistency.

The trace store aggregates total tokens, total wall time, total cost (with cache and batch tier discounts applied), and outcome status (completed vs. abandoned vs. failed-judge) per task_id. The dashboard reports CPCT distribution, not mean — the long tail of expensive tasks is where the spend hides, and a mean obscures it.
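The rollup itself can be small. The following is a sketch under stated assumptions, not a reference implementation: the `Span` shape, the field names, and the status strings are hypothetical, costs are assumed to arrive already net of cache and batch discounts, and summing span durations will overstate elapsed time when spans run in parallel.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Span:
    task_id: str
    tokens: int
    cost_usd: float   # assumed already net of cache and batch discounts
    duration_ms: int


def rollup(spans: list[Span], outcomes: dict[str, str]) -> dict[str, dict]:
    """Aggregate every span back to its task and attach the outcome status."""
    totals: dict[str, dict] = defaultdict(
        lambda: {"tokens": 0, "cost_usd": 0.0, "duration_ms": 0}
    )
    for span in spans:
        row = totals[span.task_id]
        row["tokens"] += span.tokens
        row["cost_usd"] += span.cost_usd
        row["duration_ms"] += span.duration_ms
    return {
        task_id: {**row, "status": outcomes.get(task_id, "unknown")}
        for task_id, row in totals.items()
    }


spans = [
    Span("t1", 1_200, 0.02, 900),    # parent model call
    Span("t1", 4_000, 0.05, 300),    # model call after a tool result
    Span("t1", 9_000, 0.11, 2_400),  # sub-agent call carrying the same task_id
    Span("t2", 800, 0.01, 700),
]
tasks = rollup(spans, {"t1": "completed", "t2": "failed-judge"})
# Costs of completed tasks feed the CPCT distribution.
completed_costs = [t["cost_usd"] for t in tasks.values() if t["status"] == "completed"]
```

Keeping the non-completed tasks in the rollup, rather than filtering them out early, makes the spend on abandoned and failed-judge work visible as its own line.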

Most observability vendors — LangSmith, Arize Phoenix, Braintrust, Helicone, OpenTelemetry-based custom stacks — already support this pattern. The work is propagating the task_id consistently across every model call, sub-agent spawn, and tool invocation. If a sub-agent does not inherit the parent’s task_id, the rollup is wrong and you will not notice.
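One way to make inheritance the default in a Python harness is a context variable. The sketch below uses only the standard library; `start_task`, `record_span`, and `run_sub_agent` are hypothetical helpers, not part of any vendor SDK. It raises when a span is recorded without a task_id, so a broken propagation path fails loudly instead of silently skewing the rollup.

```python
import contextvars
import uuid

# Holds the current task_id for whatever code is running in this context.
_task_id: contextvars.ContextVar = contextvars.ContextVar("task_id", default=None)


def start_task() -> str:
    """Mint a task_id at the user-visible entry point."""
    task_id = uuid.uuid4().hex
    _task_id.set(task_id)
    return task_id


def record_span(name: str, log: list) -> None:
    """Record a span, refusing to emit one that cannot be rolled up."""
    task_id = _task_id.get()
    if task_id is None:
        raise RuntimeError(f"span {name!r} recorded outside a task")
    log.append({"task_id": task_id, "name": name})


def run_sub_agent(log: list) -> None:
    record_span("sub_agent.model_call", log)


log: list = []
tid = start_task()
record_span("model_call", log)
# Work handed to another execution context gets an explicit copy of the
# parent's context, so the sub-agent sees the same task_id.
contextvars.copy_context().run(run_sub_agent, log)
```

asyncio tasks copy the current context when they are created, but work submitted to plain threads or to another process may not inherit it, which is why the copy here is explicit. Across process or service boundaries the task_id has to travel in the request itself.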

2. Prompt-cache ROI line

Prompt caching saves money only on traffic that fits the cache shape: long stable prefixes (system prompts, persistent context, tool catalogs) that recur across many requests. The discount is up to 90% on cached input tokens for most providers’ caching tiers. The trap is that not all of your input qualifies — only the prefix that matches a previously seen and still-warm cache entry.

The instrument is a per-task line that splits input tokens into three buckets: cache hits (charged at the cache rate), cache writes (the cost of populating the cache for the first time), and uncached input (full price). Ratio of hits-to-writes is the leading indicator. Anthropic’s documentation and several third-party analyses are aligned on the rough heuristic: cache writes pay back after roughly two to five hits depending on the cache tier and your traffic shape. If your hits-to-writes ratio is below that, you are paying to populate caches you are not actually reusing — either the cache TTL is too short for your traffic pattern, or the cacheable prefix is not as stable as you assumed.
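The payback point follows from two numbers: the premium paid to write the cache and the saving on each later hit. The sketch below takes both as multipliers of the uncached input price. The example values are placeholders rather than quoted prices, and the calculation ignores entries that expire before they are reused, which pushes the real payback point higher.

```python
import math


def hits_to_break_even(write_multiplier: float, read_multiplier: float) -> int:
    """Cache hits needed before one cache write has paid for itself.

    Both multipliers are relative to the uncached input price.
    """
    premium = write_multiplier - 1.0         # extra paid to populate the cache
    saving_per_hit = 1.0 - read_multiplier   # saved each time the prefix is reused
    if saving_per_hit <= 0:
        raise ValueError("cached reads must be cheaper than uncached input")
    return max(1, math.ceil(premium / saving_per_hit))


# Placeholder multipliers: writes at 2x list price, reads at 0.1x.
print(hits_to_break_even(2.0, 0.1))  # 2
```

Comparing this number with the observed hits-to-writes ratio per cached prefix shows which prefixes are earning their write cost and which are not.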

The reason this line matters at the FinOps level: a 20-point swing in cache hit rate can produce a 30%+ swing in your bill on a stable workload. Without the ROI line, that swing is invisible.

3. Batch-API utilization line

Anthropic, OpenAI, and Bedrock all offer batch processing at 50% of standard rates. The trade is latency: batch responses can take up to 24 hours, so the discount only applies to work that doesn’t need an interactive response. Anyone running periodic evaluations, scheduled report generation, document processing pipelines, or async data transformation is leaving 50% on the floor by running those through synchronous APIs.

The instrument is a per-workload classification: “interactive” vs. “batchable.” Then a utilization line showing what percentage of the batchable category actually routes through the batch API. Most teams that have measured this discover that 20–40% of their total volume is batchable, and significantly less than that fraction is actually being batched.
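Once workloads are classified, the utilization line is a short calculation. The sketch below assumes each workload record carries a token volume, a `batchable` flag set by the classification, and a `via_batch` flag showing how it is actually routed. The record shape and the sample workloads are hypothetical.

```python
def batch_utilization(workloads: list[dict]) -> dict[str, float]:
    """Share of volume that could be batched, and share of that which is."""
    total = sum(w["tokens"] for w in workloads)
    batchable = sum(w["tokens"] for w in workloads if w["batchable"])
    batched = sum(
        w["tokens"] for w in workloads if w["batchable"] and w["via_batch"]
    )
    return {
        "batchable_share": batchable / total if total else 0.0,
        "utilization": batched / batchable if batchable else 0.0,
    }


workloads = [
    {"name": "support_chat", "tokens": 700_000, "batchable": False, "via_batch": False},
    {"name": "nightly_evals", "tokens": 200_000, "batchable": True, "via_batch": False},
    {"name": "weekly_reports", "tokens": 100_000, "batchable": True, "via_batch": True},
]
line = batch_utilization(workloads)
```

In this made-up example 30% of volume is batchable and only a third of it actually goes through the batch API, so two thirds of the available discount is unclaimed.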

The migration is unglamorous — moving a job from synchronous API to batch is a queue and a callback — but the savings are immediate and durable. Worth a paragraph in any CPCT report.

4. Model-tier routing line

For every task type in production, there is a “default model” (typically the most capable one the team trusts) and a “would-be-fine cheaper model” (a Sonnet 4.6 against an Opus 4.7, a GPT-5.4 Mini against a GPT-5.4, a Gemini 3.1 Flash against a Gemini 3.1 Pro). The routing line measures, on a sample of tasks, what the CPCT would have been if the cheaper model had handled them, and what fraction of those cheaper-model attempts would have hit the same SLO.

This is the line that tells you whether your defaults are economically rational. Most production agents over-route to the most capable model out of caution and never re-test that assumption against newer mid-tier models. A Sonnet that landed at 70% of Opus capability six months ago may now land at 85% of Opus capability with new model releases — but you won’t notice unless the routing line keeps measuring it.

The NVIDIA NeMo flywheel case referenced in How Vertical Agents Self-Improve in Production — a routing model fine-tuned from Llama 3.1 70B down to a Llama 3.1 8B variant achieving 96% accuracy at 10x cost reduction — is the canonical version of this play. The framework generalizes: every model in your harness has a smaller candidate that’s worth periodically benchmarking.
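A minimal sketch of the routing line, assuming a shadow run in which a sample of tasks is replayed on the cheaper model and judged against the same SLO. It prices a simple cascade: try the cheaper model first and fall back to the default when the cheaper attempt misses. It also assumes the default model always meets the SLO and that the judge is free. The class and field names are hypothetical, and means are used only to keep the example short.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass
class ShadowSample:
    default_cost: float     # what the task cost on the default model
    cheaper_cost: float     # what the replay cost on the cheaper model
    cheaper_met_slo: bool   # judge verdict on the cheaper model's output


def routing_line(samples: list[ShadowSample]) -> dict[str, float]:
    if not samples:
        raise ValueError("need at least one shadow sample")
    default_cpct = fmean([s.default_cost for s in samples])
    # In a cascade, a miss on the cheaper model costs both attempts.
    cascade_cpct = fmean(
        [s.cheaper_cost + (0.0 if s.cheaper_met_slo else s.default_cost) for s in samples]
    )
    pass_rate = fmean([1.0 if s.cheaper_met_slo else 0.0 for s in samples])
    return {
        "default_cpct": default_cpct,
        "cascade_cpct": cascade_cpct,
        "cheaper_pass_rate": pass_rate,
        "delta_per_task": default_cpct - cascade_cpct,
    }


samples = [
    ShadowSample(1.00, 0.20, True),
    ShadowSample(1.20, 0.25, True),
    ShadowSample(0.80, 0.15, False),
    ShadowSample(1.00, 0.20, True),
]
line = routing_line(samples)
```

With these illustrative numbers the cheaper model meets the SLO on 75% of tasks, and the cascade costs about 0.40 per task against 1.00 on the default. Re-running the same calculation after each model release is what keeps the default honest.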

Where the savings actually hide

With the four instruments in place, four categories of saving become visible, in roughly the order of return-on-effort.

Prompt caching, when it fits. The fastest dollar-saver in a CPCT-instrumented system is usually fixing the cache hit rate. A system prompt that varies by user (because someone interpolated a username into it) invalidates the cache, so the prefix is billed at the full input rate on every call instead of the roughly 10% cached rate. The fix is moving the variable content out of the cached prefix. A two-line change in most agent frameworks; a 30% bill cut on cache-heavy workloads.

Batch API utilization on the work that can wait. Every workload classified as batchable but running synchronously is leaving the 50% discount on the table. Migrate them. Less glamorous than the others, but it pays the most steadily.

Model cascading and tier routing. Once the routing line is measuring it, the cases where the cheaper model would have hit the SLO become a list of work to migrate. The migration is gradual — route 10%, then 25%, then 50% — and the SLO is the abort condition. The discipline is treating the cheaper model as a candidate, not a downgrade, and letting the SLO data make the decision.

Effort tuning, task budgets, and harness optimization. The Box deployment cited in the Opus 4.7 piece — 56% fewer model calls and 50% fewer tool calls — is the genre of saving that comes from harness work, not from a model swap. Lowering effort by one tier on tasks where the SLO doesn’t require the higher tier. Setting a task budget that constrains the loop to a known token allowance. Modifying the system prompt to discourage over-thinking on simple subtasks. These are unglamorous individually; cumulatively they often produce the largest single savings in a mature CPCT program.

The pattern across all four is that the savings come from instrumenting the decisions you were already making, not from heroic re-architecture. The teams that pay the most for AI in 2026 are the teams that have not measured the four lines above.

The accounting question nobody is ready for

FinOps for AI is being built right now, mostly by adapting existing cloud FinOps practice. The adaptation is imperfect in one specific way: cloud FinOps was built around resources with well-defined units (vCPU-hours, GB-months, request counts) and reasonably stable cost-per-unit-of-work ratios. AI workloads have neither.

The question the CFO will eventually ask the head of engineering is some version of “our monthly AI bill went up 40% and our user-facing traffic was flat — what happened?” In a token-only world, the engineering team has to answer in token terms: more thinking per call, more tool calls per task, more retries, a tokenizer change. In a CPCT-instrumented world, the engineering team can answer in business terms: cost per completed support ticket rose 12%, cost per generated PR fell 25%, cost per resolved incident was flat. The first answer makes the CFO nervous. The second answer makes the conversation about which workloads merit the investment.

Three of the operational maturity moves covered in earlier issues map onto this:

The Model Reliability Engineering discipline gives you the SLO that defines “completed.” Without an SLO, “completed” is subjective and CPCT is meaningless.

The Eval Lifecycle gives you the judge that decides whether a task counted as completed. Without the judge, the outcome status field in your task-scoped trace cannot be filled.

The Shadow AI Agents / agent identity work gives you attribution. Without it, your CPCT rollup cannot answer “which team’s traffic drove the change.”

CPCT is the metric that unifies them at the financial layer. It is what makes the reliability investment defensible to the budget.

Build order

The instruments stack in a specific sequence, and skipping any of the early ones makes the later ones unreliable.

Define a task. What is the user-visible unit of work that counts as completed? Resolved ticket, generated PR, processed document, finalized decision. Pick one per product surface; resist the urge to nest task definitions before the primary one is working.

Plumb task_id through every model call, tool call, and sub-agent. This is the work. Done correctly, every span in your trace store rolls up cleanly. Done incompletely, sub-agent traffic shows up as orphaned spend.

Add the cost columns to the rollup. Per-task: total tokens (split into cached / cache-write / uncached / batch), total wall time, total model spend, total tool spend. Outcome status (completed / abandoned / failed-judge). Provider and model used.

Define CPCT and chart its distribution. Mean is the seductive metric and the wrong one. P50, P90, P99 are the metrics that surface the long-tail tasks where the spend hides.

Build the cache ROI, batch utilization, and tier routing lines. Each is a derived view of the same trace store. None require new instrumentation if step 2 was done right.

Set per-product CPCT targets. Treat them as SLOs. The product owner and finance jointly own the budget; engineering owns the implementation.

Connect to the harness improvement loop. When CPCT exceeds the target on a given task type, that task type is a candidate for the next harness iteration described in How Vertical Agents Self-Improve in Production. The cluster of expensive tasks is a failure cluster in cost terms.
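To see why the distribution step matters, the sketch below summarizes a list of per-task costs with Python's standard `statistics` module. The cost values are invented: 95 cheap tasks and 5 runaway ones. The helper name is hypothetical.

```python
import statistics


def cpct_summary(costs: list[float]) -> dict[str, float]:
    """Mean plus P50, P90, and P99 of per-task cost."""
    # quantiles(n=100) returns 99 cut points; index 49 is P50,
    # index 89 is P90, and index 98 is P99.
    cuts = statistics.quantiles(costs, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(costs),
        "p50": cuts[49],
        "p90": cuts[89],
        "p99": cuts[98],
    }


# 95 tasks at $0.10 and 5 tasks that looped and cost $5.00 each.
costs = [0.10] * 95 + [5.00] * 5
summary = cpct_summary(costs)
```

Here the mean comes out near 0.35, a cost that no task in the sample actually had. P50 and P90 sit at 0.10 and P99 at 5.00, which points straight at the handful of tasks that should go into the next harness iteration.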

None of this requires a new vendor. All of it requires consistency in trace propagation and a small amount of FinOps glue code. The teams that have done it talk about CPCT the way DevOps teams talk about p99 latency: a north-star metric that aligns engineering, product, and finance on the same view.

Bottom line

Per-token pricing remains the unit the providers bill in. Per-task cost is the unit the business runs on. Closing the gap between those two is the unglamorous infrastructure work that will define which AI products stay profitable in 2026 and which ones quietly turn into loss leaders.

The four instruments — task-scoped traces, cache ROI, batch utilization, tier routing — are mostly engineering hygiene on top of trace data you already have. None of them require a model upgrade. None of them require a new vendor. All of them require deciding that “tokens-per-day” is no longer the chart you optimize against.

The next wave of frontier model releases will likely keep the per-token headline number flat while adjusting tokenizer efficiency, effort behavior, and thinking economics. The bill will move; whether your bill moves up or down depends on whether you can read it at the task layer.

Pick a task definition this week. Plumb the task_id next week. The four lines follow.

Related from The AI Runtime:

Claude Opus 4.7: The Production Engineer’s Breakdown — task budgets, tokenizer change, the cost framing this article extends

How Vertical Agents Self-Improve in Production — the harness improvement loop and the data flywheel case

The Eval Lifecycle: What Actually Happens Between “Proof of Concept” and “Production” — the judge that decides whether a task counted as completed

Model Reliability Engineering: Who Owns It When the AI Is Confidently Wrong? — the SLO discipline that defines completion